First of all, I'm not a computer scientist and definitely not an AI researcher, but I do read about this topic quite a decent amount. I'm probably going to get downvoted to oblivion, but this is a very legitimate question that I have been curiously rolling in my mind for quite a while. Correct me if I'm wrong, but this is my understanding of how AI systems like GPT-4 are developed:

- The research team begins by analyzing the weights of the previous model. They observe this past architecture and see what they did right and what they did wrong. Based on these insights, they modify the model, experiment with different optimizations or even incorporate new breakthrough algorithms and advanced data processing techniques.

- After integrating a variety of new technologies, the team does that major very expensive training run that everyone talks about where they bombard the new model with an unimaginable amounts compute and data for many many months straight. The goal here is that the newly developed model significantly surpasses its predecessor, which they can then release to the market to make a profit.

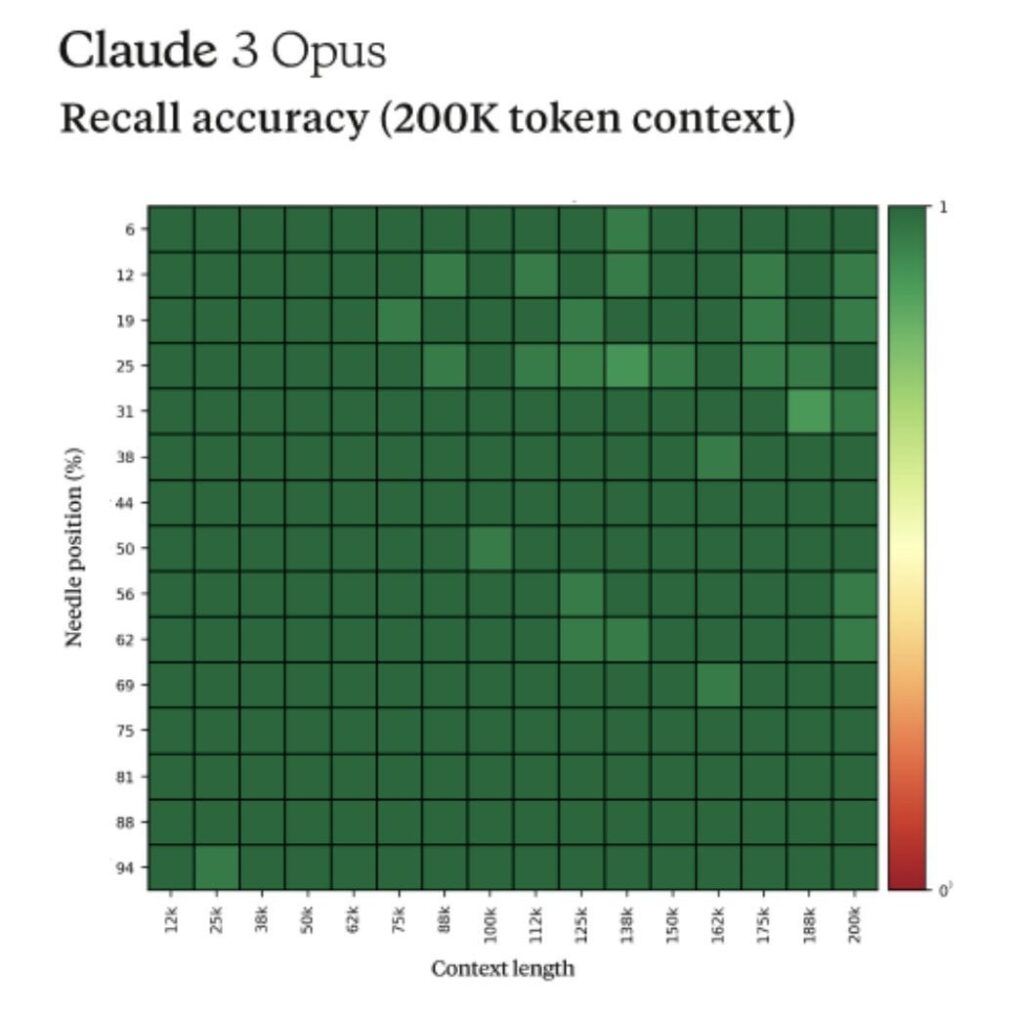

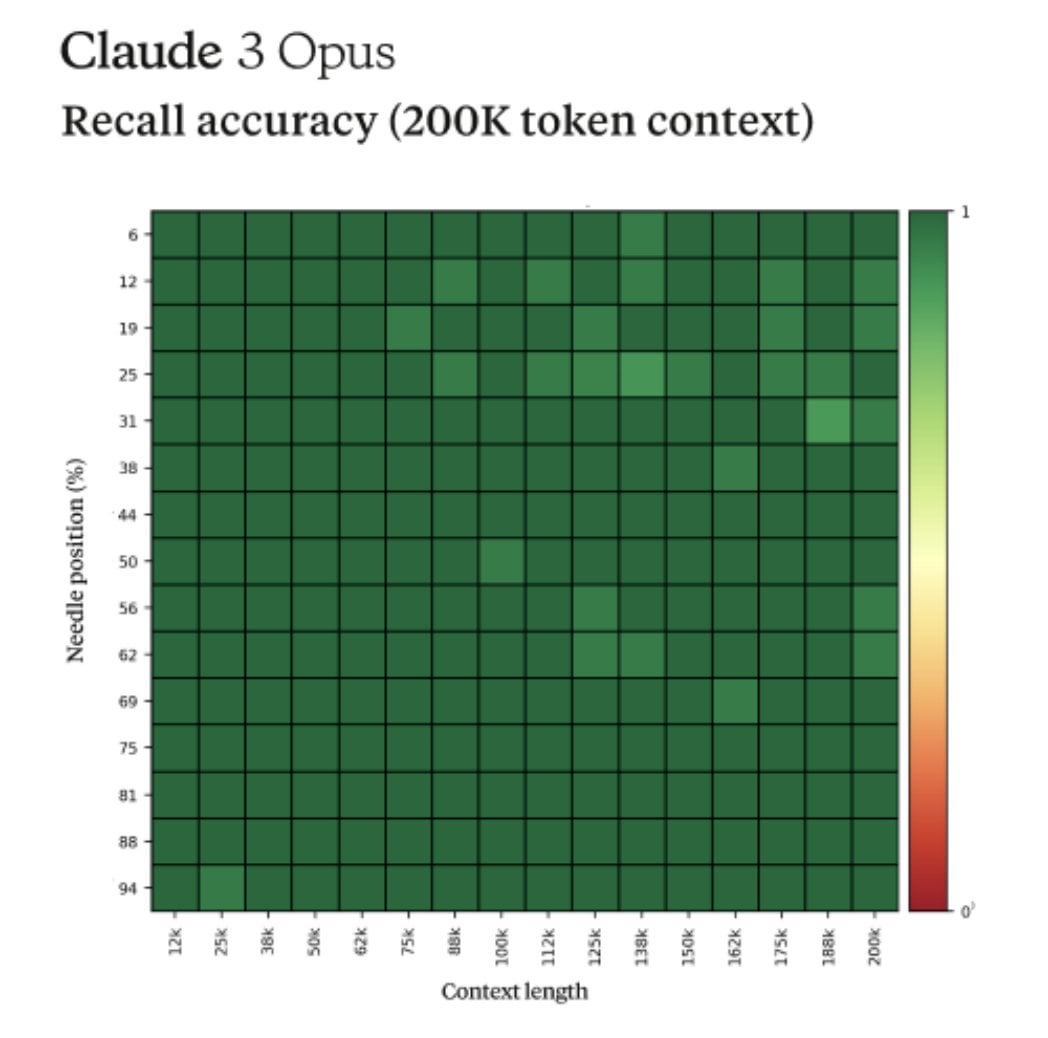

- Once the training is complete it is prompted by the team, heavily tested for safety (whatever that means), researched and then eventually released to the public. It is during this second and primarily the final phase that researchers often observe a range of emergent properties, like contextual awareness in GPT-4 or meta awareness in Claude 3 Opus (remember that viral needle in a haystack example).

Now my question here is, if the emergent behaviors get discovered this late into the cycle without any sort of protections placed onto the system from the very beginning, isn't it pretty logical to assume that if one day something clicks, and GPT-20 "wakes up", getting what many researchers call emergent agency, we essentially have nothing to protect ourselves from that?

If these properties are discovered in such later stages, a possible rogue AGI already has time to have its way. We know that machines are at least 10000x faster than the human brain, so making a quick plan that is a million steps ahead of humans would be rather simple, no? It wouldn't do this out of malevolence or because it hates humans, but it would do it to maximize its reward function and to prevent anything from getting in its way from doing that. As far as I'm aware the alignment teams can't really help us here since we know from safety experts themselves that the field is far behind capabilities research with an increasingly widening gap and almost no funding…

What I'm trying to say here is that if we don't have any "boxes" or "alerts" that warn us during the training run saying "hey, maybe there is something going on here that we should be careful about", aren't we essentially just YOLOing it? One day we WILL discover some sort of an algorithm or a final piece of the puzzle that will lead to what computer science has been dreaming of since the very beginning.

I'm obviously treading in the science fiction territory here and I probably misunderstand to a great extent how all of this works, but I think the core question is legitimate.

Give me your best counter arguments, tell me how stupid I am and explain to a non techie like me what the plan seems to be.

https://old.reddit.com/r/Futurology/comments/1d9s9rb/there_is_nothing_that_we_can_do_to_prevent_agi/