We implemented two identical experiments in which we randomly invited survey participants before or after an FFF protest and then compared beliefs, attitudes, and behavioral intentions related to climate policy17,18. As is common in many political science and sociology departments in Germany, the ethical evaluation of the study was conducted within the departments of the first author. Because no concerns were raised, an external ethical review was waived. The study was conducted in full accordance with the Declaration of Helsinki. All participants were informed about their rights and the content of the study, and all gave written informed consent.

Sample and procedure

We draw on a non-probability sample. Participants came from a pool of people recruited for an earlier study. Recruitment for that initial study (not reported here) took place seven weeks earlier via advertisements on Twitter and Facebook19. The ads specifically targeted people interested in migration, climate, and housing – three of Germany’s most prevalent political issues at the time. The ads were worded so that people from across the opinion spectrum would participate. A link routed individuals to a survey hosted on an external server. Participants were filtered out if they reported having no interest in any of the three topics. After completing this earlier study, they could leave their email addresses if they wished to be contacted for future studies.

We invited all contacts in this pool via email to participate in the current experiments. We randomly split the list of email addresses (N = 2643) into two halves. The first half received the invitation to our online surveys on the Thursday before the FFF demonstration (i.e., September 23 and October 21, 2021, respectively, at 12:01 a.m.). The second half received the invitation on the Saturday after the protests (i.e., September 25 and October 23, 2021, respectively, at 12:01 a.m.). On each day, we sent out two reminders and emphasized the importance of participating during that day. Surveys were closed at 12:00 a.m. We treat those who answered our questionnaire on the day before the respective demonstration as the control group and those who responded on the day after the respective protest as the treatment group. This definition results in the following sample sizes: pre-election experiment Treatment = 565; pre-election experiment Control = 603; post-election experiment Treatment = 473; post-election experiment Control = 561.
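The split and the group definition described above can be sketched as follows. This is a minimal illustration, not the authors' actual procedure: the function names, the seed, and the placeholder addresses are hypothetical, and only the dates of the first experiment are shown.

```python
import random
from datetime import date

def split_invitations(emails, seed=0):
    """Randomly split an address list into two halves (hypothetical helper)."""
    shuffled = list(emails)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

# Survey days of the first experiment, as given in the text.
CONTROL_DAY = date(2021, 9, 23)    # Thursday before the protest
TREATMENT_DAY = date(2021, 9, 25)  # Saturday after the protest

def assign_group(response_day, control_day=CONTROL_DAY, treatment_day=TREATMENT_DAY):
    """Classify a respondent by the day on which they answered."""
    if response_day == control_day:
        return "control"
    if response_day == treatment_day:
        return "treatment"
    return None  # responses on any other day are not assigned to a group here

# Placeholder addresses standing in for the N = 2643 contacts.
emails = [f"person{i}@example.org" for i in range(2643)]
early, late = split_invitations(emails, seed=42)
print(len(early), len(late))
```

Because N = 2643 is odd, the two halves in this sketch differ in size by one address.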

When inviting participants, we had to strike a delicate balance. On the one hand, the questionnaire focused solely on the FFF, and we assumed that we would have lost participants’ trust had we pretended it was about another topic. On the other hand, we had to prevent potential participants who were not interested in (or were opposed to) the climate movement from systematically opting out after reading the invitation. We therefore used generic formulations in the invitation emails and the consent form that mentioned the protest only en passant and did not convey any information about the nature or size of the protest (see Supplementary Table 11).

Sample characteristics can be found in Supplementary Table 1. We achieved satisfactory variance in socio-demographics; however, due to our recruiting strategy, people with above-average political interest, opinion strength, and activism are overrepresented in our sample. The high levels of political interest are advantageous for our design, as they make it likely that participants had heard about the FFF protests, which made headlines for days. Notably, the sample is relatively diverse regarding opinions on climate policies, ranging from FFF activists and sympathizers to opponents and to undecided or uninterested bystanders (i.e., participants interested in one of the other two topics).

Finally, even though we randomly split the list of emails before each protest, we invited participants from the same pool so that 1218 respondents participated in both surveys. We statistically controlled for the influence of double participation (see below).

Measures

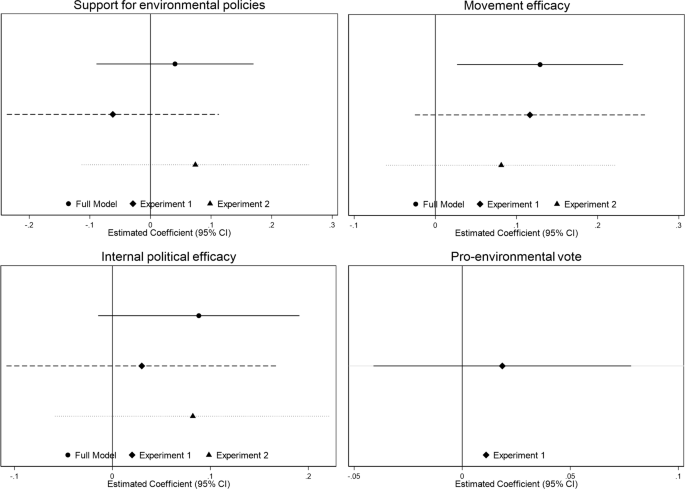

After participants had given informed consent, we informed them that the FFF movement called for a national climate strike. Then, participants answered several questions. First, as a treatment check, we asked whether participants had heard about the (call to) protest. Second, we assessed support for environmental policies by asking participants for their agreement with the statement that the ‘national government should do everything to stop climate change [Die Bundesregierung sollte alles dafür tun, um den Klimawandel aufzuhalten].’ Third, we measured perceived movement efficacy by asking for agreement with the statement that ‘the climate movement will have an impact with this campaign [Die Klimabewegung wird mit dieser Aktion etwas bewirken].’ Fourth, we measured perceived individual political efficacy by asking for agreement with the statement that ‘either way, people like me have no influence on what the government does [Leute wie ich haben so oder so keinen Einfluss darauf, was die Regierung tut].’ We inverted this variable so that higher values imply higher efficacy. Answers to all three items ranged from 1 “completely disagree” to 5 “completely agree.” Finally, we measured vote for a pro-environmental party by asking participants for whom they would vote in the national election. Answer options included all parties in the parliament and an option for all other parties, non-voters, and non-eligibility. We recoded the variable into a binary variable contrasting a vote for the pro-environmental Greens with all other answers.
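The two recoding steps described above can be illustrated with a short sketch. The function names and the answer label `"Greens"` are assumptions made for illustration. The text does not spell out how non-voters and ineligible respondents were handled beyond "all others", so coding them as 0 here is likewise an assumption.

```python
def invert_likert(score, low=1, high=5):
    """Reverse a Likert answer so that a higher value means higher efficacy."""
    if not low <= score <= high:
        raise ValueError(f"score {score} outside the {low}-{high} range")
    return low + high - score  # on a 1-5 scale: 1 -> 5, 2 -> 4, ..., 5 -> 1

def voted_green(party):
    """Binary indicator: 1 for the Greens, 0 for every other answer option."""
    return 1 if party == "Greens" else 0  # "Greens" is a hypothetical label

answers = [1, 2, 3, 4, 5]
print([invert_likert(a) for a in answers])
print([voted_green(p) for p in ["Greens", "SPD", "non-voter"]])
```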

Analyses

We employ linear regression models and interpret the mean differences between treatment and control groups. Main models can be found in Supplementary Tables 2–5. Since the vote choice lay in the past for all participants at the time of the second protest around the coalition negotiations, we only test the effects on this variable for the pre-election experiment. For the other three outcomes, we ran separate analyses for the pre-election experiment, for the post-election experiment, and for both experiments pooled. For the pooled models, we clustered standard errors at the individual level to account for repeated observations. The models produced substantively similar results, so we report the results from the pooled experiments, as those models have the highest statistical power.
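To illustrate the logic of the pooled comparison, the sketch below computes the treatment coefficient of a regression on a single treatment dummy (which equals the difference in group means) together with a standard error clustered by respondent. It is a simplified illustration under stated assumptions: it omits the control variables used in the main models, applies the plain cluster-robust sandwich formula without a small-sample correction (statistical packages usually apply one), and all names and data are hypothetical.

```python
from collections import defaultdict
from math import sqrt

def treatment_effect_clustered(rows):
    """rows: (person_id, treated, outcome) tuples with treated in {0, 1}.

    Returns the difference in means (the OLS coefficient on the treatment
    dummy) and its cluster-robust standard error without small-sample
    correction.
    """
    rows = list(rows)
    y1 = [y for _, t, y in rows if t == 1]
    y0 = [y for _, t, y in rows if t == 0]
    n1, n0 = len(y1), len(y0)
    mean1, mean0 = sum(y1) / n1, sum(y0) / n0

    # Each respondent's total contribution to the estimate: residuals are
    # summed within a person, so repeated observations are not treated as
    # independent.
    contribution = defaultdict(float)
    for pid, t, y in rows:
        if t == 1:
            contribution[pid] += (y - mean1) / n1
        else:
            contribution[pid] -= (y - mean0) / n0

    variance = sum(c * c for c in contribution.values())
    return mean1 - mean0, sqrt(variance)

# Toy data: two respondents, each observed once in control and once in treatment.
toy = [("A", 0, 1.0), ("A", 1, 6.0), ("B", 0, 3.0), ("B", 1, 4.0)]
effect, se = treatment_effect_clustered(toy)
print(effect, round(se, 3))
```

With a single binary regressor, the sandwich estimator for the slope reduces to the sum of squared per-cluster contributions used here, which is why no matrix algebra is needed in this special case.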

The treatment was successful, as almost all participants in the post-protest sample had heard about the event (first experiment: post-protest 95% vs. pre-protest 88%; second experiment: post-protest 84% vs. pre-protest 63%). Although we randomly allocated respondents to treatment and control groups, the final sample composition of both groups in the second experiment shows signs of selectivity, most likely due to nonresponse. To identify systematic differences between the treatment group and the control group, we ran stepwise logistic regression analyses, successively excluding variables that did not significantly explain differences between the two groups. We added all variables that significantly explained differences between the groups as control variables in our main models (i.e., household size, frequency of political engagement, and position on climate politics; see also Supplementary Note 1).

Finally, we conduct a moderation analysis to test whether the effects markedly differ for people with a particular interest in climate and the environment. For this analysis, we interacted the filter variable from the baseline study, interest in climate vs. the other two issues, with the treatment and re-ran all models (see Supplementary Tables 6–9).
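The idea behind the interaction term can be shown with a small sketch. In a model containing only the treatment dummy, the climate-interest dummy, and their product, the interaction coefficient equals the difference between the two subgroup treatment effects. The code below computes exactly that quantity from cell means. It is illustrative only: it leaves out the control variables and standard errors of the reported models, and the names and numbers are hypothetical.

```python
from statistics import mean

def interaction_effect(rows):
    """rows: (treated, climate_interest, outcome) tuples, both flags in {0, 1}.

    Returns the treatment effect among respondents interested in climate,
    the effect among those interested in the other issues, and their
    difference (the interaction term). All four cells must be non-empty.
    """
    cells = {(t, c): [] for t in (0, 1) for c in (0, 1)}
    for t, c, y in rows:
        cells[(t, c)].append(y)
    m = {key: mean(values) for key, values in cells.items()}
    effect_climate = m[(1, 1)] - m[(0, 1)]
    effect_other = m[(1, 0)] - m[(0, 0)]
    return effect_climate, effect_other, effect_climate - effect_other

# Toy data with one observation per cell.
toy = [(0, 0, 2.0), (1, 0, 3.0), (0, 1, 4.0), (1, 1, 4.5)]
print(interaction_effect(toy))
```

A negative third value, as in this toy example, would mean that the treatment effect is smaller among respondents with a particular interest in climate.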

For all analyses, we base our interpretation on two-sided unadjusted p values (α = 0.05; see also Supplementary Note 2).