In a bid to solve the power, land, and cooling crunch facing AI infrastructure, Aikido Technologies is building data centers directly into floating offshore wind platforms. The company says a proof-of-concept unit will be installed in Norway later this year, with its first commercial project targeted for the UK in 2028, positioning compute where renewable energy, seawater cooling, and space are most abundant.

How the Sea Becomes a Server Room for AI Workloads

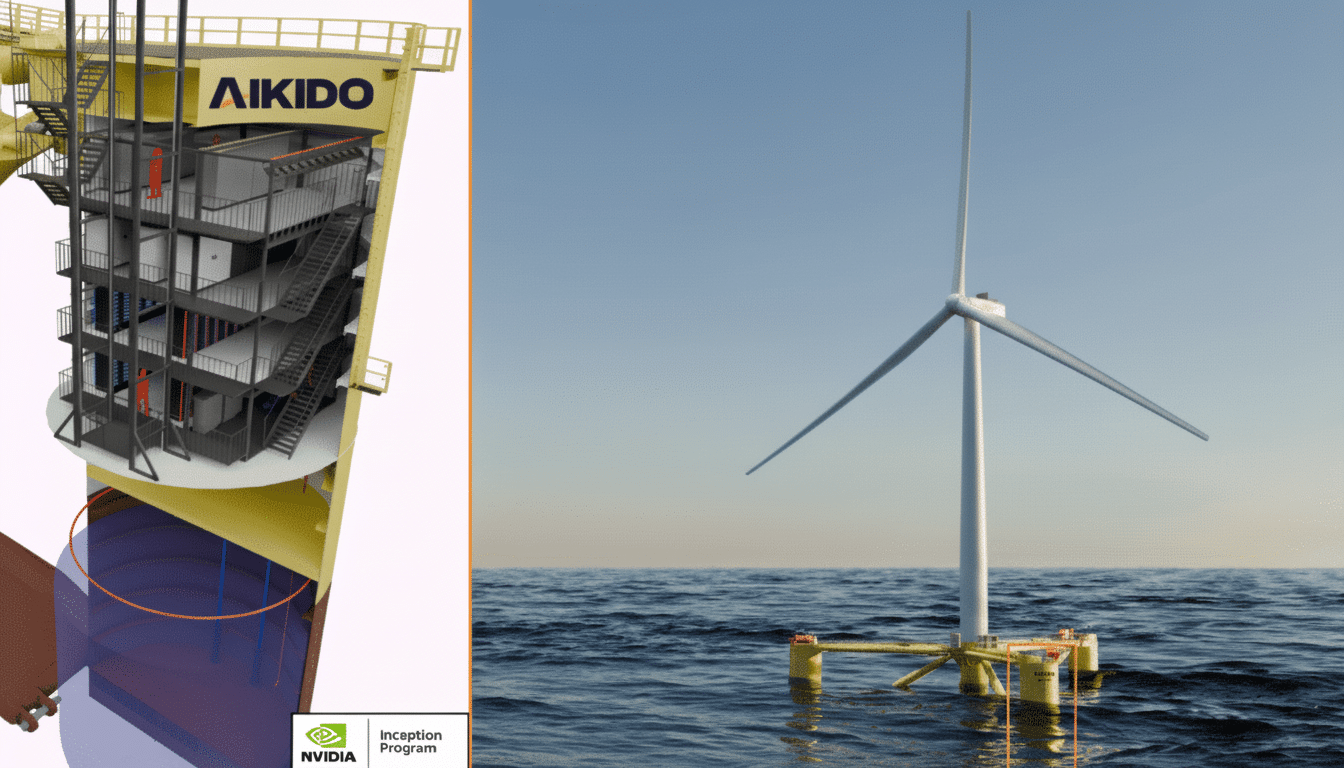

Aikido’s design pairs a floating wind turbine substructure with a sealed data center enclosure and integrated battery storage, all within a single steel unit that can be prefabricated and assembled dockside. By co-locating compute with generation, the platform aims to minimize grid interconnection delays, use the ocean as an efficient heat sink, and deliver modular capacity that can be scaled in clusters.

The enclosure is purpose-built for AI-grade compute, where power density and heat loads are rising rapidly. Seawater-assisted heat exchange can cut cooling energy overhead, a core driver of Power Usage Effectiveness (PUE), the ratio of total facility energy to the energy delivered to IT equipment. Onboard batteries buffer wind variability and provide ride-through for transient faults. The result is a self-contained microgrid for high-value workloads.
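To make the link between cooling overhead and PUE concrete, here is a minimal sketch in Python. PUE is total facility energy divided by IT equipment energy. The function name and every energy figure below are hypothetical illustrations, not Aikido measurements.

```python
def pue(it_kwh: float, cooling_kwh: float, other_overhead_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    total_kwh = it_kwh + cooling_kwh + other_overhead_kwh
    return total_kwh / it_kwh


# Hypothetical figures for the same IT load under two cooling approaches.
# These numbers are assumptions chosen only to show how the ratio moves.
mechanical_cooling = pue(it_kwh=1000.0, cooling_kwh=400.0, other_overhead_kwh=100.0)
seawater_cooling = pue(it_kwh=1000.0, cooling_kwh=50.0, other_overhead_kwh=100.0)

print(f"PUE with mechanical cooling: {mechanical_cooling:.2f}")
print(f"PUE with seawater cooling:   {seawater_cooling:.2f}")
```

Because IT energy is the denominator, each kilowatt-hour removed from cooling lowers PUE directly, which is why the measured figure from the proof-of-concept will matter more than any illustrative one.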

Why Go Offshore for Data Centers, and Why Now

Data center power demand is surging alongside generative AI and cloud growth. The International Energy Agency estimates data centers consumed roughly 460 TWh in 2022 and could exceed 1,000 TWh by 2026 if current trends persist. In several markets, grid congestion and land scarcity are already gating development; Ireland’s Central Statistics Office reported that data centers accounted for 18% of the country’s electricity use in 2022, sparking tighter connection rules and curtailments.

Offshore wind offers a counterweight. Mature bottom-fixed farms and an accelerating pipeline of floating projects offer high capacity factors, often cited at 40–60%, and a pathway to large-scale additions near coastal demand centers. By moving compute offshore, operators can avoid costly land acquisition, reduce freshwater use for cooling, and tap battery-backed renewables without long waits for substation upgrades.

Engineering Hurdles and Ecological Considerations

Operating servers at sea is not trivial. Designers must contend with wave loads, mooring dynamics, corrosion, and salt ingress over multi-decade lifecycles. Classification bodies such as DNV provide standards for floating structures and maritime power systems, but the combination of high-density electronics and offshore wind hardware introduces new integration and maintenance challenges.

Thermal discharge is another concern. Seawater cooling concentrates waste heat locally, which requires careful modeling, diffuser design, and monitoring to protect marine ecosystems. There is precedent that the ocean and compute can coexist: Microsoft’s Project Natick trial off the Orkney Islands found the sealed underwater server capsule had significantly lower component failure rates than land-based counterparts and accumulated marine life on its exterior, suggesting limited ecological disruption under the test conditions. In 2023, municipal authorities in Shanghai backed an initial phase of an underwater data center project, underlining global interest in ocean-based computing.

Connectivity, Cooling, and Workloads at Sea

Fiber backhaul remains the lifeline. Many offshore wind zones sit near subsea cable landing points or coastal network hubs, enabling low-latency links to users and other data centers. The latency added by tens of kilometers of undersea fiber is measured in fractions of a millisecond, which is negligible for most cloud and AI workloads when the network is designed properly.
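The latency claim can be checked with a back-of-the-envelope calculation. The sketch below estimates propagation delay only, assuming a typical refractive index of about 1.468 for silica fiber. That value and the 50 km distance are assumptions for illustration, and real links add switching and routing delay on top.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in vacuum, km/s
FIBER_REFRACTIVE_INDEX = 1.468      # assumed typical value for silica fiber


def one_way_fiber_delay_ms(distance_km: float,
                           refractive_index: float = FIBER_REFRACTIVE_INDEX) -> float:
    """Propagation delay over a fiber run, in milliseconds (one direction)."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    seconds = distance_km * refractive_index / SPEED_OF_LIGHT_KM_S
    return seconds * 1000.0


# Hypothetical 50 km run from an offshore platform to a coastal hub.
one_way = one_way_fiber_delay_ms(50.0)
round_trip = 2 * one_way
print(f"one-way: {one_way:.3f} ms, round trip: {round_trip:.3f} ms")
```

At roughly 5 microseconds per kilometer, a 50 km run adds about a quarter of a millisecond each way, consistent with the "fractions of a millisecond" figure above.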

Cooling at sea can be exceptionally efficient. Direct seawater heat exchangers, or closed-loop systems that reject heat via seawater, drastically reduce the need for mechanical cooling. For compute, that opens the door to denser racks and liquid-cooled accelerators. Meanwhile, onboard batteries can shape the power profile: they smooth short-term wind variability and let operators schedule batch AI training or background processing when generation is highest, reserving steady battery-backed power for latency-sensitive inference.
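The scheduling idea can be sketched as a simple dispatch loop. This is an illustrative toy model, not Aikido's control logic: the function, its parameters, and all figures are hypothetical, and it ignores battery efficiency losses and charge-rate limits. It serves a fixed inference load first, charges the battery with any surplus, and gives what remains to batch work.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class StepResult:
    inference_served_kw: float  # power delivered to the inference load
    batch_kw: float             # surplus power given to batch jobs
    battery_kwh: float          # battery energy at the end of the step


def dispatch(wind_kw: Sequence[float], inference_kw: float, capacity_kwh: float,
             start_kwh: float, max_batch_kw: float, step_h: float = 1.0) -> List[StepResult]:
    """Toy dispatch: inference first, then battery charging, then batch work."""
    stored = start_kwh
    results = []
    for gen in wind_kw:
        if gen >= inference_kw:
            surplus = gen - inference_kw
            # Charge the battery before running batch jobs (a design assumption).
            charge_kw = min(surplus, (capacity_kwh - stored) / step_h)
            stored += charge_kw * step_h
            batch = min(surplus - charge_kw, max_batch_kw)
            served = inference_kw
        else:
            # Wind shortfall: discharge the battery to keep inference running.
            deficit = inference_kw - gen
            discharge_kw = min(deficit, stored / step_h)
            stored -= discharge_kw * step_h
            batch = 0.0
            served = gen + discharge_kw
        results.append(StepResult(served, batch, stored))
    return results


# Hypothetical hourly wind output in kW, including one hour with no wind.
results = dispatch(wind_kw=[500.0, 100.0, 0.0, 400.0], inference_kw=200.0,
                   capacity_kwh=300.0, start_kwh=100.0, max_batch_kw=250.0)
for hour, r in enumerate(results):
    print(hour, r)
```

Charging before batch work favors inference uptime over batch throughput. An operator with different priorities could reverse that order or reserve only part of the battery for ride-through.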

Permitting Requirements and the Path to Scale

Regulation will define the pace. In the UK, seabed leasing is overseen by The Crown Estate and Crown Estate Scotland, while environmental assessments involve the Marine Management Organisation and related agencies. Norway has designated areas such as Utsira Nord for floating wind, providing potential sites for pilots. Any co-located data center will need approvals covering energy generation, thermal discharge, navigation safety, and cable routes.

Commercial models could evolve. One route is self-consumption, where the platform’s wind generation primarily powers its compute and the grid supplements it as needed. Another is hybrid participation: selling excess power via power purchase agreements while running the data center as a controllable load. Either way, integration with national grids and marine spatial planning will demand close coordination with transmission operators and maritime authorities.

What to Watch Next as Ocean Data Centers Emerge

Aikido’s Norway proof-of-concept will be scrutinized for real-world PUE, uptime through storms, maintenance access strategies, and the effectiveness of its battery and thermal management. If results track with expectations, and if permitting pathways solidify, the company’s 2028 UK target could mark the first commercial step toward a new class of ocean-native data centers.

The pitch is simple but ambitious: bring compute to the clean power source, not the other way around. With AI accelerating and grids straining, the sea may become the most practical place to cool the hottest chips.