In January, OpenAI said 40 million people asked ChatGPT health-related questions each day.

Max Davis

PADUCAH — In January, OpenAI said 40 million people asked ChatGPT health-related questions each day. However, a local doctor — along with a western Kentucky resident — said using artificial intelligence for medical advice can lead to misinformation.

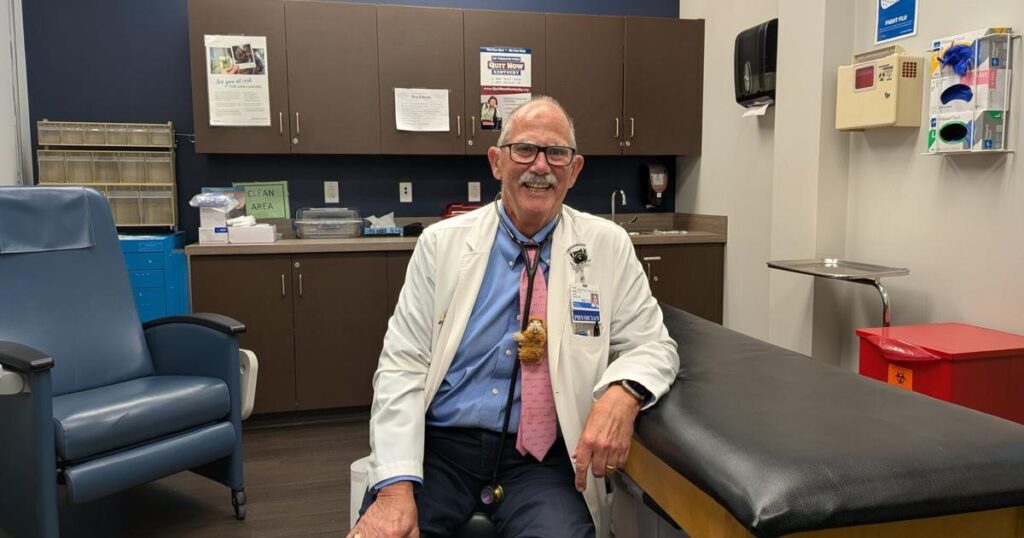

John Cecil, a primary care doctor at Baptist Health Paducah, said he has not been impressed with AI’s responses to healthcare-related questions. While the answers are quick, he said medical diagnoses are complicated and AI often misses the nuances.

“We had a patient who had AI look into her left shoulder pain. Her shoulder hurt, and AI was like, ‘It could be trauma, it could be arthritis,’” Cecil said.

However, Cecil said neither was the cause. The pain was actually due to heart disease, and the patient had other symptoms beyond the left shoulder pain.

“If they had put shortness of breath, difficulty walking up steps, and left arm or shoulder pain, then yeah, you would have gotten a message that says heart attack,” Cecil said. “But they didn’t put that in, so there was a delay there in care.”

Cecil explained that AI could have given the correct answer if the patient had included all of her symptoms. However, some important details are often noticed only by a trained professional, and when they are missing, the result can be a misdiagnosis or incorrect advice. Cecil advised people to see a healthcare professional, who can identify subtle clues that AI might overlook.

“(Some conditions) sound exactly the same when the patient tells you about it, but then there are certain little things you learn that could be the differential, called differential diagnosis,” Cecil said. “It’s probably a sore throat, but here are four or five other things it could be, and then you want to explain to the person what you’re going to do about it.”

Cecil said AI is used in the office to help track patient visits and to support research, but he does not believe it is ready to be relied on fully for medical advice.

“I am not trying to throw smoke at anybody who’s using AI, because I know they are going to do it, but don’t make any critical decisions based on it,” Cecil said.

Keith Hoffman, a western Kentucky resident, said he used AI in the past to help research what a gastric bypass followed by a gastric sleeve would mean for his wife. Originally starting with a Google search, Hoffman eventually shifted to multiple AI chatbots to get more information quickly.

The information, while plentiful, was partially refuted by her surgeon and doctor, according to Hoffman.

“Some of the information, especially about the pre-surgery, was inaccurate,” Hoffman said. “I’m comparing that to what her surgeon and physician actually said, and (AI) told us to do certain things that were allowed when they were not allowed.”

“I think for some things it can be good, but for medical advice, it is not,” Hoffman continued.