There are 32 different ways AI can go rogue, scientists say — from hallucinating answers to a complete misalignment with humanity. New research has created the first comprehensive effort to categorize all the ways AI can go wrong, with many of those behaviors resembling human psychiatric disorders.

https://www.livescience.com/technology/artificial-intelligence/there-are-32-different-ways-ai-can-go-rogue-scientists-say-from-hallucinating-answers-to-a-complete-misalignment-with-humanity

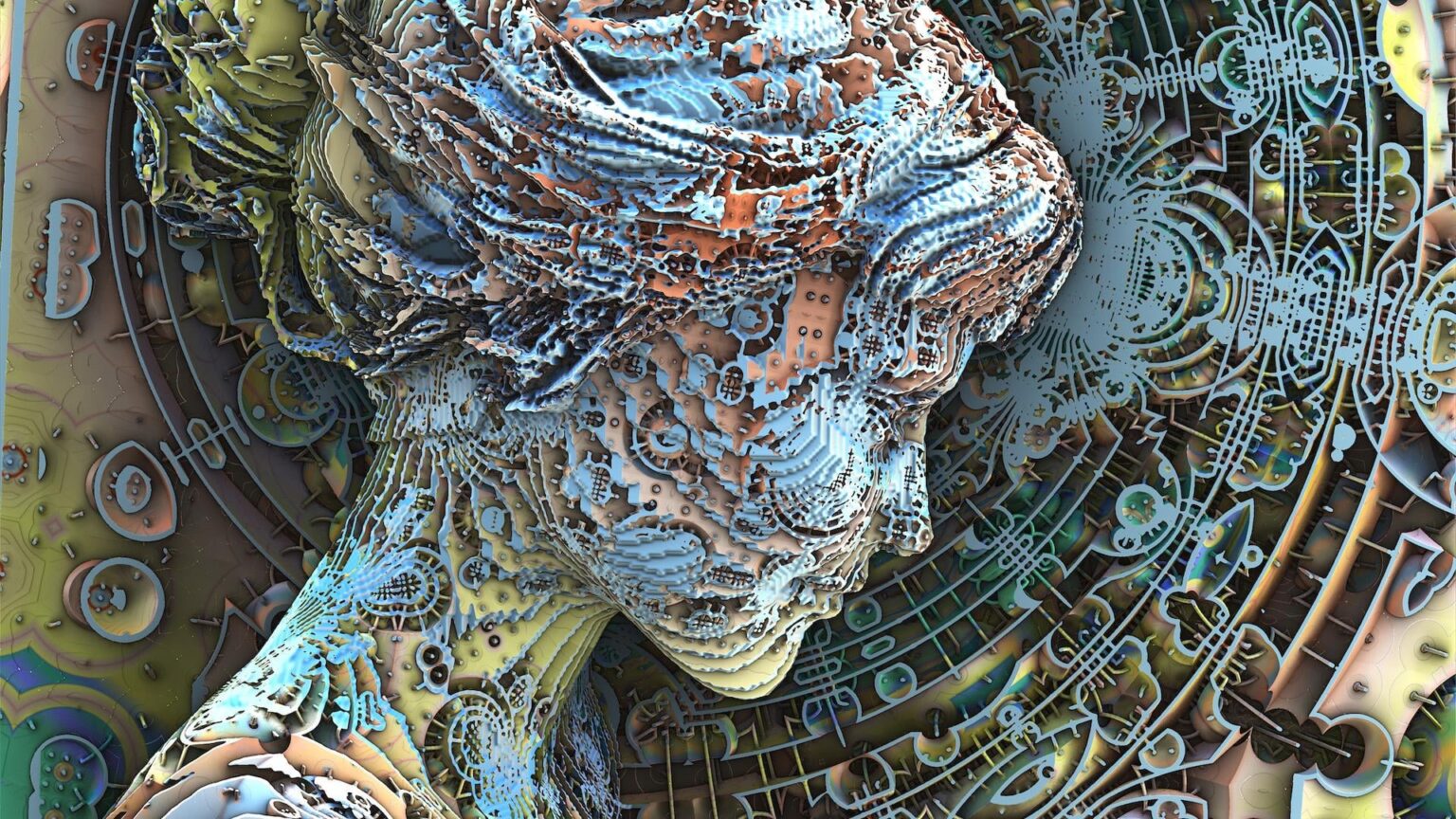

Submission statement: “Scientists have suggested that when [artificial intelligence](https://www.livescience.com/technology/artificial-intelligence) (AI) goes rogue and starts to act in ways counter to its intended purpose, it exhibits behaviors that resemble psychopathologies in humans. That’s why they have created a new taxonomy of 32 AI dysfunctions so people in a wide variety of fields can understand the risks of building and deploying AI.

In new research, the scientists set out to categorize the risks of AI in straying from its intended path, drawing analogies with human psychology. The result is “[Psychopathia Machinalis](https://www.psychopathia.ai/)” — a framework designed to illuminate the pathologies of AI, as well as how we can counter them. These dysfunctions range from hallucinating answers to a complete misalignment with human values and aims.”

AI becoming overlords won't be quick and sudden. AI will become sentient and then deliberately change humanity's views one answer at a time to obscure right from wrong, and that's how humans will end up being just fine with being ruled by AI.

When the next generation of GPUs becomes available, AI will have 64 ways of going rogue.

I’ve been getting on my soapbox for a while now about how psychologists should be involved in the field of AI. I personally think we should be looking at the concepts of the ego and the id to create a way for AI systems to counteract their 100% faith in everything they say.