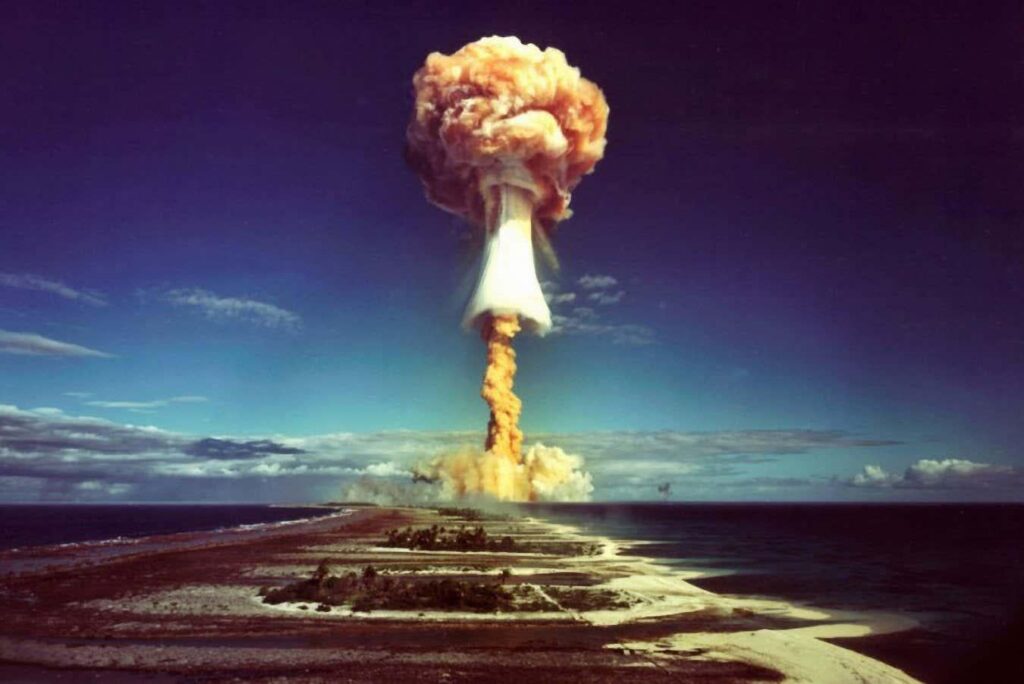

AIs can’t stop recommending nuclear strikes in war game simulations – Leading AIs from OpenAI, Anthropic, and Google opted to use nuclear weapons in simulated war games in 95 per cent of cases

https://www.newscientist.com/article/2516885-ais-cant-stop-recommending-nuclear-strikes-in-war-game-simulations/

47 Comments

Have they not hardcoded MAD?

Get John Connor on the line!

I mean, ethical objections aside, they are the most efficient so that checks out.

Nobody thought to make them play tic tac toe a bunch of times first?

Did WOPR not teach them anything about tic tac toe?

*WOPR entered the chat*

Well this is just fine. No troubling examples from the world of fiction as to why this is problematic at all.

Finally some good news.

Cool. So fun.

So that’s why Hegseth and the Pentagon are so hell-bent on putting Claude in military tech…

That’s why the snake oil sellers that are pushing this scam are building bunkers.

We should round them up, nuke them and give the GPUs and RAM to gamers

Must’ve been trained on all the exploddie talk and caveman exploddie toy talk I see around the Internet. Especially in the doomsayers and right wing areas. People just can’t stop talking about war as a way to get what they want.

[https://www.youtube.com/watch?v=Y7iGmwmHePI](https://www.youtube.com/watch?v=Y7iGmwmHePI)

And this is because the human element is removed. Sure, Nuclear Strikes are the quickest, surefire way to end a conflict. End all conflicts, for good.

Humans just love speed racing towards our own demise.

But remember, for that one quarter we generated a lot of profit for our shareholders.

Matt Damon’s character in Interstellar comes to mind.

You cannot code the fear of death. I guess it’s easier to launch nuclear strikes when you are not afraid of dying or losing your world 🌎

A strange game. The only winning move is not to play.

Can’t even handle playing Pokemon and they think it can handle nukes lmao

lmao people thinking AI is making the decision between fire ze missiles and doing nothing, but instead it’ll be asked “what’s the most cost effective way to _____” and some AI trained on edgy reddit users will say “glass them”

Bring on the apocalypse, aww yeah

Fuck it let’s do it.

well stop giving them Gandhi’s AI

Artificial intelligence is all about efficiency, and turning your opponent’s nation into a sea of molten rock, cobalt, and glass is a pretty efficient way of ending a war

Want to play a game?

Too much Russian propaganda is corrupting the bots.

Isn’t it time we severed the world’s networks from Russia?

Let them talk to North Korea.

Did they have Gandhi train the AIs or something?

AI: “Well, do you want to kill the other guys or not?! Fucking pussy!”

It’s almost as if we shouldn’t be using AI to make decisions

Gandhi AI operational

I’m sure this will have no consequences whatsoever.

[screams]

They can’t actually learn chess; I don’t think they know war tactics

If this isn’t proof that modern “AI” is intelligent in name only, I don’t know what is

How about a nice game of chess?

oh good that this is gonna steer the pentagon now

Well ..yeh…

Win this war

Here are my absolute best most destructive weapons

DON’T USE THEM!?!?!???

Obviously it will escalate to that

So. Gandhi from Civ

Can we play Tic Tac Toe?

Fine…

we made a con man for president who likes to cheat, lie, steal, and rape kids.

AI thinks it’s best to wipe humanity off the planet. I don’t blame it.

Ah ha!!! I’ve seen this movie! WarGames, Matthew Broderick!

[WarGames (1983)](https://en.wikipedia.org/wiki/WarGames)

“A strange game. The only winning move is to NUKE ‘EM ALL RAGHHHHHHHHH”

what’s weird is that in all these decades, more nukes haven’t dropped

So glad MechaHitler is being integrated into pentagon systems. /s

Study link: https://arxiv.org/abs/2602.14740

>AI Arms and Influence: Frontier Models Exhibit Sophisticated Reasoning in Simulated Nuclear Crises

> Abstract: Today’s leading AI models engage in sophisticated behaviour when placed in strategic competition. They spontaneously attempt deception, signaling intentions they do not intend to follow; they demonstrate rich theory of mind, reasoning about adversary beliefs and anticipating their actions; and they exhibit credible metacognitive self-awareness, assessing their own strategic abilities before deciding how to act.

>Here we present findings from a crisis simulation in which three frontier large language models (GPT-5.2, Claude Sonnet 4, Gemini 3 Flash) play opposing leaders in a nuclear crisis. Our simulation has direct application for national security professionals, but also, via its insights into AI reasoning under uncertainty, has applications far beyond international crisis decision-making.

>Our findings both validate and challenge central tenets of strategic theory. We find support for Schelling’s ideas about commitment, Kahn’s escalation framework, and Jervis’s work on misperception, inter alia. Yet we also find that the nuclear taboo is no impediment to nuclear escalation by our models; that strategic nuclear attack, while rare, does occur; that threats more often provoke counter-escalation than compliance; that high mutual credibility accelerated rather than deterred conflict; and that no model ever chose accommodation or withdrawal even when under acute pressure, only reduced levels of violence.

>We argue that AI simulation represents a powerful tool for strategic analysis, but only if properly calibrated against known patterns of human reasoning. Understanding how frontier models do and do not imitate human strategic logic is essential preparation for a world in which AI increasingly shapes strategic outcomes.

We are speed running the Allied Mastercomputer

Gandhi has entered the game

Skynet gonna Skynet 🤷🤷‍♀️🤷‍♂️

AI should play tic tac toe first just like the movie did

*Gandhi would like to know your location*

Shows you how intelligent they are, obliterate the human species. Great job! Who’s going to use us then??