What are the risks and benefits of ‘AI agents’? – By 2027, half of companies that use GenAI will have launched ‘agentic AI’, also known as an AI agent, according to Deloitte.

https://www.weforum.org/stories/2024/12/ai-agents-risks-artificial-intelligence/


From the article

>By 2027, Deloitte predicts that half of companies that use generative AI will have launched [agentic AI pilots or proofs of concept](https://www2.deloitte.com/us/en/insights/industry/technology/technology-media-and-telecom-predictions/2025/autonomous-generative-ai-agents-still-under-development.html) that will be capable of acting as smart assistants, performing complex tasks with minimal human supervision.

>On the verge of this technological leap, it’s important to understand what AI agents are, their potential impact, and how to navigate the associated risks.

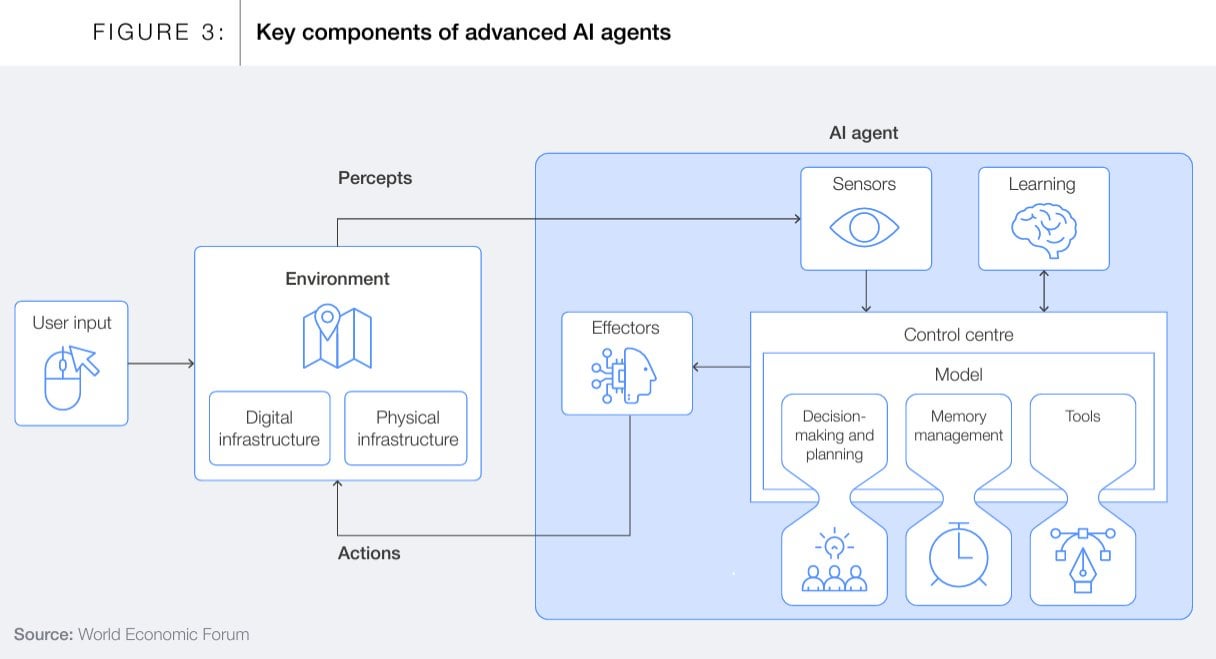

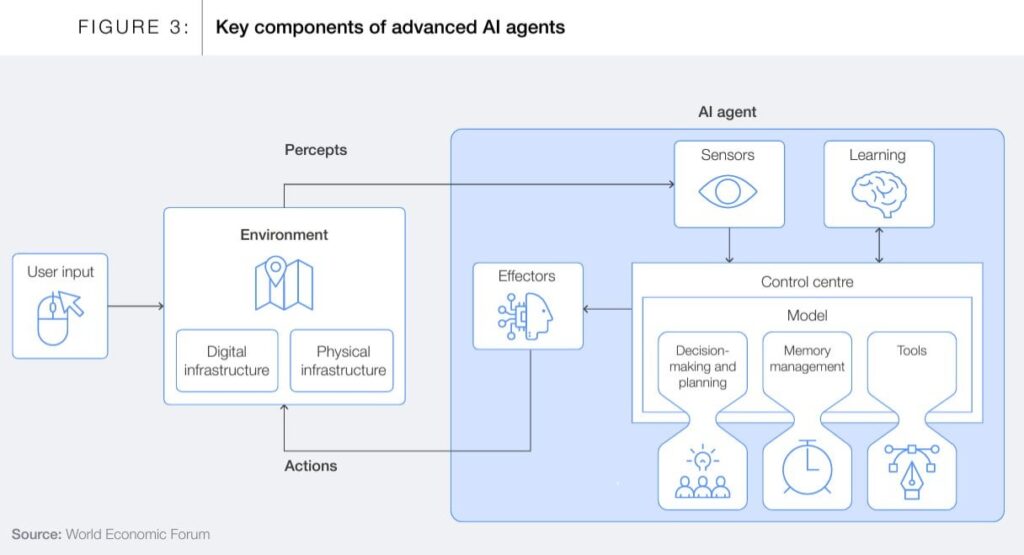

>In collaboration with Capgemini, the World Economic Forum has published a new white paper, [*Navigating the AI Frontier: A Primer on the Evolution and Impact of AI Agents*](https://www.weforum.org/publications/navigating-the-ai-frontier-a-primer-on-the-evolution-and-impact-of-ai-agents/), which explores the capabilities and implications of AI agents, to provide stakeholders with a better understanding of how these systems can drive meaningful progress across sectors.

Deloitte does some interesting write-ups, and so does Gartner:

Spatial Web overview:

https://www2.deloitte.com/content/dam/insights/us/articles/6645_Spatial-web-strategy/DI_Spatial-web-strategy.pdf

When large consultancies make long-term predictions, it's usually a sure sign the opposite will happen.

Anyone remember the Metaverse? McKinsey predicted it would be a multi-billion-billion-million venture by yesterday.

Many companies are already offering AI agents to their end users. Check out [Agentforce from Salesforce](https://bestaiagents.ai/agent/agentforce).

Been experimenting with AI agents lately and it’s fascinating to see how they’re evolving! While there are valid concerns about AI autonomy, I’ve found they can be incredibly helpful for repetitive tasks when properly configured. Recently tried opencord for managing some basic workflows – it’s surprisingly intuitive and has good safety controls. The key is starting small and gradually expanding use cases as you build trust. Curious what safeguards other companies are putting in place as they adopt these tools? 🤔

The more dependent companies, or even governments, become on AI, the more severe the consequences of cyberattacks or attacks on AI infrastructure would be.