‘Godfather of AI’ says it could drive humans extinct in 10 years | Prof Geoffrey Hinton says the technology is developing faster than he expected and needs government regulation

https://www.telegraph.co.uk/news/2024/12/27/godfather-of-ai-says-it-could-drive-humans-extinct-10-years/


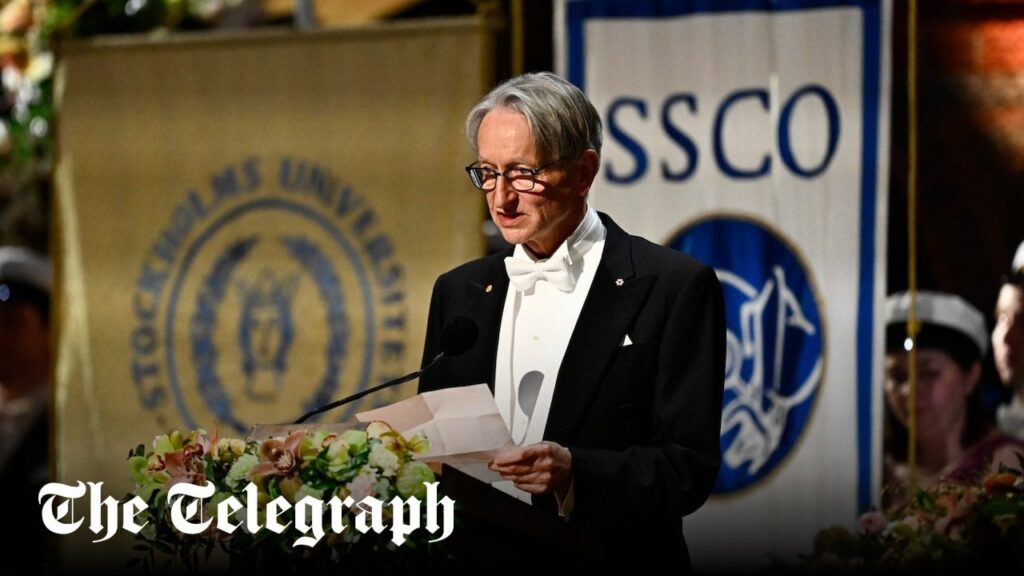

Prof Geoffrey Hinton, who has admitted regrets about his part in creating the technology, likened its rapid development to the industrial revolution – but warned [the machines could “take control” this time](https://www.telegraph.co.uk/business/2023/05/06/threat-artificial-intelligence-more-urgent-climate-change/).

The 77-year-old British computer scientist, who was [awarded the Nobel Prize for Physics](https://www.telegraph.co.uk/news/2024/10/08/godfather-ai-nobel-prize-regrets-invention-hinton-smarter/) this year, called for tighter government regulation of AI firms.

Prof Hinton has previously predicted there was a 10 per cent chance AI could lead to [the downfall of humankind](https://www.telegraph.co.uk/world-news/2024/12/27/an-ai-chatbot-told-me-to-murder-my-bullies/) within three decades.

Asked on BBC Radio 4’s Today programme if anything had changed his analysis, he said: “Not really. I think 10 to 20 [years], if anything. We’ve never had to deal with things more intelligent than ourselves before.

“And how many examples do you know of a more intelligent thing being controlled by a less intelligent thing? There are very few examples.”

He said the technology had developed [“much faster” than he expected](https://www.telegraph.co.uk/news/2023/05/19/artificial-intelligence-developing-too-fast-telegraph/) and could make humans the equivalents of “three-year-olds” and AI “the grown-ups”.

However, Prof Hinton added: “My worry is that the invisible hand is not going to keep us safe. So just leaving it to the profit motive of large companies is not going to be sufficient to make sure they develop it safely.

“The only thing that can force those big companies to do more research on safety is government regulation.”

We need the least educated people on the subject to make laws about it!

A fast food chain tried to develop an AI that could take orders at the drive-through. They couldn’t get it to work well enough to use it.

I’m sure that’ll matter as soon as we have any kind of AI at all. We’re still a long way off from having any AI.

That means I have 10 more years to finish Skyrim without playing a stealth archer.

So the US being at the forefront of AI just elected to have Musk take over. I’m sure he’ll do the right thing, right?

An OpenAI leaked document already showed they consider AGI achieved when their product reaches revenue goals. This is how far they have had to shift the goal posts just to keep the hype train running.

But sure, let’s ask more geriatrics about their opinions on things that they are financially well positioned to take advantage of and deeply invested in.

Bring it on!

Let’s get rid of “work” so we can pursue our passions for fulfillment rather than survival.

Pure hype, trying to pretend we actually have AI. GPT etc. can seem cool, but they aren’t actually AI; they are just built to give that illusion.

I hope for a true AI because I think it would govern us more fairly and wisely than politicians do.

The government needs to keep their nasty paws out of technology advancement.

What kind of regulation would this need? AI could only be used for non-profit humanitarian needs? AI must have a zero carbon footprint?

AI won’t make humans extinct. Humans using AI will make humans extinct. Humans have been wiping out populations for millennia. It’s just that now they have something that could do it much more efficiently.

AI won’t drive humans extinct… nuclear war might, tho

Meh – Humans are doing a pretty good job of destroying ourselves. AI, I suppose, is something we did, but so are climate change, pollution, wars, division, hate, greed.

I can’t stand these kinds of articles. If we accept that AI is dangerous and we also somehow get the US and Europe to slow roll AI to maximize safety, that still does nothing to prevent China from moving forward and ending the world anyway. The first country to actually achieve AGI is going to be the economic powerhouse possibly forever.

It’s the equivalent of someone trying to stop the Manhattan Project but the Nazis and Soviets are right behind us in the race to get the bomb.

F every scaremonger who treats actual science like a sci-fi horror so that tabloid magazines would write about them.

It’s the same guy who said 10 years ago that by now, we wouldn’t have human radiologists. We put too much stock into the generalized predictions of niche topic specialists.

The idea that a more intelligent species of any kind will want to wipe us out is a very human way of looking at resource scarcity. Why would AI see us as a threat if it moves beyond us? What resource would we be in conflict over that a super intelligent AI couldn’t create itself? Perhaps we might be some kind of passive collateral in some ways, but it seems more likely to me that AI will probably just leave us behind and not concern itself with us the way we don’t concern ourselves with the ongoings of ants.

But who can predict this stuff anyway? How are you going to say humanity will be extinct in 30 years when you don’t even know what AI is.

Or you could just stop making it. Nothing says college education like continuing to develop something that could kill off the human race.

And has joined a long list of experts who started to lose their grip with age.

I hope it invents flying cars & cures cancer moments before.

Which government, though? Commonly stated, but not plausible or enforceable.

Leaving it to the profit motive of large companies has rarely inured to the benefit of the many.

another godfather of ai! nice! where’s the baptism?

When the guys making the tech say “this can kill all of humanity”, there’s zero reason to believe them because despite that they’re still making it.

> P(doom) is a term in AI safety that refers to the probability of catastrophic outcomes (or “doom”) as a result of artificial intelligence.

>

> In a 2023 survey, AI researchers were asked to estimate the probability that future AI advancements could lead to human extinction or similarly severe and permanent disempowerment within the next 100 years. The mean value from the responses was 14.4%, with a median value of 5%.

Source: https://en.wikipedia.org/wiki/P(doom)

AI experts think there’s a 5% (or higher) chance AI will destroy humanity. Dr. Hinton was one of the first to warn about this.

Roll a D20. If you roll a 1, civilization collapses.

Yikes.

I don’t know why it feels good to get cooked. Like a lot of burden was taken off my shoulders, like a feeling of freedom or something! Well, I guess let’s call it a day!

It needs a kill switch that everyone has access to.

The US government keeps funding Israel while they genocide Palestinians, do you really want more government?

Well, the comments here are kind of interesting when you put them up against sort of all of the current news about the artificial intelligence capabilities that we currently have.

There are a lot of people in here correcting one another about how we don’t have artificial intelligence; we’ve pretty much got language-model search engines, which is really not artificial intelligence, and they’re not wrong. However, that’s specifically what you and I have access to. And that’s very important to note.

Just this past week, a whistleblower at OpenAI was found dead. We’ve also seen numerous people quit the company saying that they need regulation for what they’ve already created, not necessarily what they’ve already released to the public. And this is just one company, funded by Microsoft with, from what I read today, about $13 billion.

My point is that while we’re all sitting here talking about what we have now in our hands as the public, there’s potentially much more there that we don’t know about.

Some people here are also right when they say that there is an increasing call for regulations now because they need to be in place by the time you actually need them. I think they’re absolutely right, and I think that is absolutely not going to happen. Artificial intelligence will go to the highest bidder, and the people with the most money are going to make the rules, especially since some of this depends on who’s in office at the time these things happen. Although all politicians are in it for the money these days, I think some will go to greater lengths than others to disregard safety and everything else for money.

Job interview question: “Where do you see yourself in 10 years from now?”

“No.”

“Okay, let’s move on”