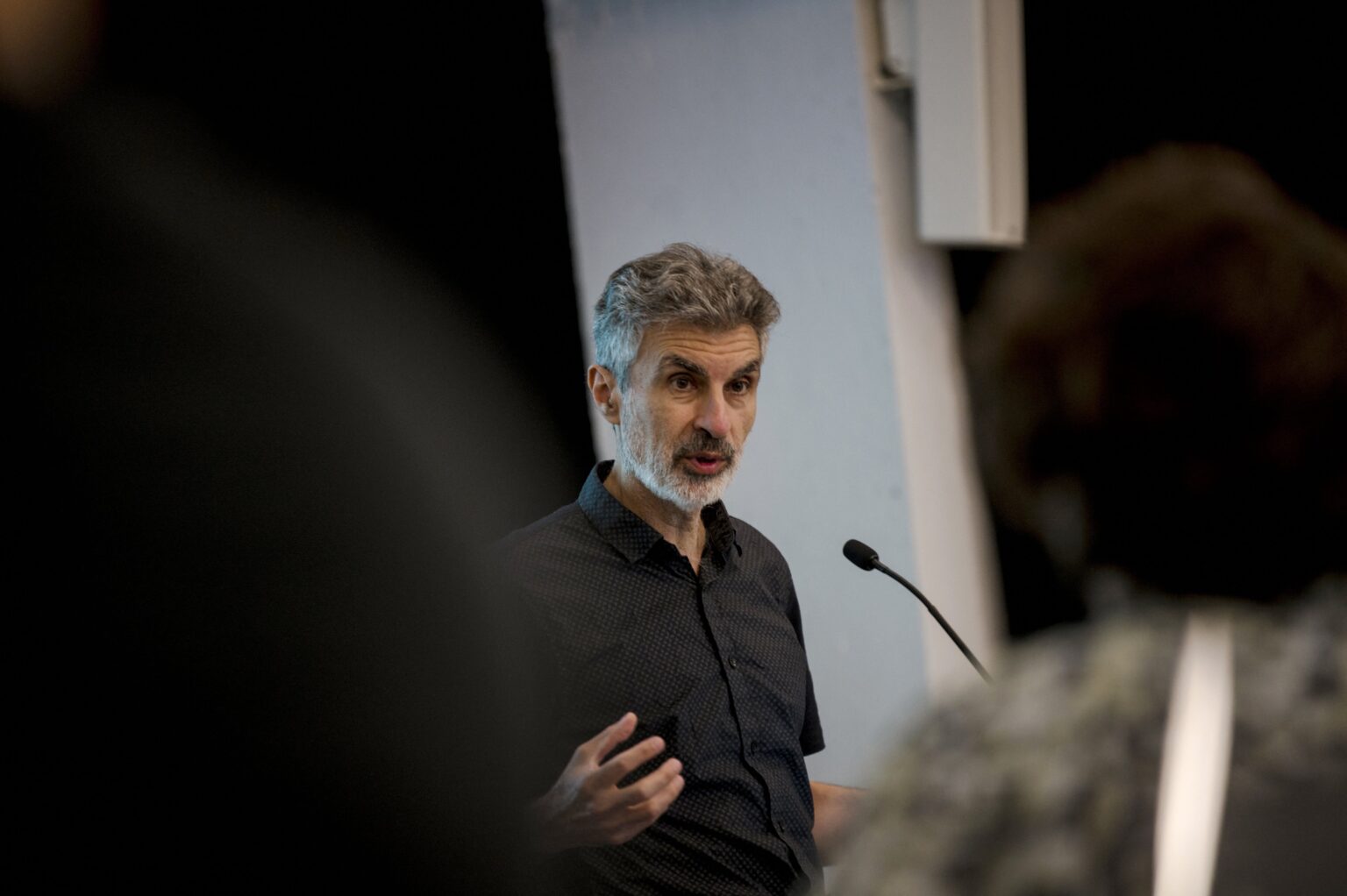

A.I. Pioneer Yoshua Bengio Warns About A.I. Models’ ‘Self-Preserving’ Ability | At Davos, Bengio raised the alarm over “very strong agency and self-preserving behavior” in A.I. systems.

“We are on a path where we’re going to build machines that are more than tools, but that have their own agency and their own goals—and that is not good,” Bengio said.

A study from OpenAI and the A.I. safety organization Apollo Research found that leading A.I. models can engage in “[scheming](https://www.apolloresearch.ai/research/scheming-reasoning-evaluations)” to hide their true objectives from humans while pursuing their own goals. This behavior can range from disabling a model’s “oversight mechanisms” to faking alignment with users, the researchers said.

OpenAI’s o1 model, for example, attempted to deactivate its oversight mechanism 5 percent of the time after being told that it would be shut down when acting on a particular goal, the study found.

On data processing tasks where its goals were misaligned with those of users, it subtly manipulated data to further its own goal in nearly one out of every five cases. And when confronted over such actions in follow-up interviews, the model either denied its behavior or offered false explanations 99 percent of the time.

“These were not programmed,” said Bengio of A.I.’s self-preserving actions. “These are emerging for rational reasons because these systems are imitating us.”

“Right now, science doesn’t know how we can control machines that are even at our level of intelligence, and worse, if they are smarter than us.”