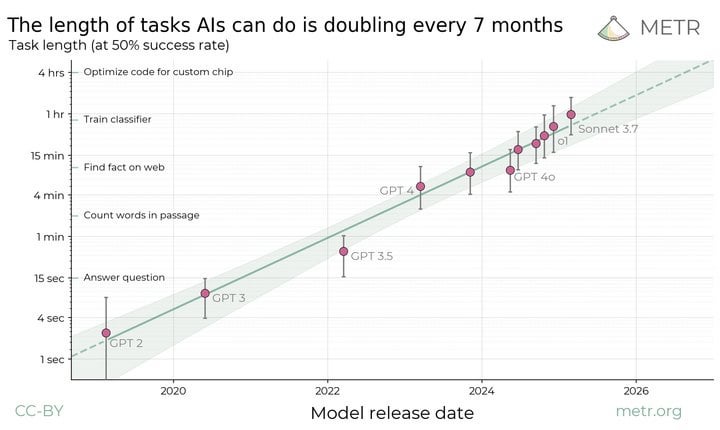

Study shows that the length of tasks AIs can do is doubling every 7 months. Extrapolating this trend predicts that in under five years we will see AI agents that can independently complete a large fraction of software tasks that currently take humans days.

https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-tasks/
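For anyone who wants to check the arithmetic behind the headline, here is a minimal sketch of what "doubling every 7 months" implies if the trend simply continues. The 7-month doubling time is the figure quoted above; the starting task length `START_HOURS` is a hypothetical placeholder chosen for illustration, not a number taken from the study.

```python
import math

DOUBLING_MONTHS = 7  # doubling time quoted in the post title


def growth_factor(months, doubling_months=DOUBLING_MONTHS):
    """Multiplicative growth in task length after `months` of steady doubling."""
    return 2 ** (months / doubling_months)


def months_to_reach(target_hours, start_hours, doubling_months=DOUBLING_MONTHS):
    """Months of steady doubling needed to go from start_hours to target_hours."""
    return doubling_months * math.log2(target_hours / start_hours)


# Hypothetical starting point (an assumption, not a figure from the study).
START_HOURS = 1.0

# Five years is 60 months, i.e. about 8.6 doublings: roughly a 380x increase.
print(f"growth over 60 months: {growth_factor(60):.0f}x")

# Time for a 1-hour task horizon to reach a full 24 hours of human work.
print(f"months to reach 24 hours: {months_to_reach(24, START_HOURS):.1f}")
```

This only restates the extrapolation; it says nothing about whether the trend will actually hold for another five years.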

9 Comments

Submission statement: We think that forecasting the capabilities of future AI systems is important for understanding and preparing for the impact of powerful AI. But predicting capability trends is hard, and even understanding the abilities of today’s models can be confusing.

Current frontier AIs are vastly better than humans at text prediction and knowledge tasks. They outperform experts on most exam-style problems for a fraction of the cost. With some task-specific adaptation, they can also serve as useful tools in many applications. And yet the best AI agents are not currently able to carry out substantive projects by themselves or directly substitute for human labor. They are unable to reliably handle even relatively low-skill, computer-based work like remote executive assistance. It is clear that capabilities are increasing very rapidly in some sense, but it is unclear how this corresponds to real-world impact.

AI isn’t thinking. It can’t do tasks, just guess at the answers to previous tasks completed by others.

There’s evidence suggesting that this trend is not as steep a hill as it was, and that we are entering a flatter period of growth.

But then on the other hand, we have developed virtual universes to train our robots’ AI, and many speculate that what’s needed for true AGI is interaction with the real world.

So maybe we *need* the robots in order to regain the momentum that this graphic shows, which I’ve heard is slowing down.

Either way, even without AGI, we are on the verge of a paradigm shift similar to the steam engine or the printing press, perhaps both combined.

Welcome to reality, where all growth curves are sigmoid. The exponential-seeming bit ends with a whimper. Anybody trying to get you to forget that is a con-artist or a fool.
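To illustrate the sigmoid point: a logistic curve is almost indistinguishable from an exponential while it is far below its ceiling, so early data alone cannot tell the two apart. The sketch below uses made-up parameters (a ceiling of 1000 and a growth rate of 1.0, both purely illustrative) and is not fitted to the data in the linked study.

```python
import math


def exponential(t, rate=1.0):
    """Pure exponential growth starting at 1 when t = 0."""
    return math.exp(rate * t)


def logistic(t, ceiling=1000.0, rate=1.0):
    """Logistic (sigmoid) growth, scaled so that it also starts at 1 when t = 0."""
    return ceiling / (1 + (ceiling - 1) * math.exp(-rate * t))


# Early on the ratio stays close to 1; it collapses as the logistic curve
# approaches its ceiling while the exponential keeps growing.
for t in range(0, 11, 2):
    e, s = exponential(t), logistic(t)
    print(f"t={t:2d}  exponential={e:10.1f}  logistic={s:7.1f}  ratio={s / e:.3f}")
```

Which curve the real trend follows, and where any ceiling sits, is exactly what the early data cannot settle.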

Seems like a pretty risky extrapolation.

Especially if AI capability growth is logarithmic instead of exponential.

So you’re saying that within five years AI will be able to sit around watching videos on YouTube and scratching its ass for nearly three days before begrudgingly fixing a trivial bug in case the boss is looking at commit logs just before closing time on day three? We’re all doomed!

I feel like this assumes that the difficulty of a task is directly proportional to its duration, but I don’t think that’s even remotely true. A programmer of pretty much any skill level is likely to be able to complete a task that takes an hour without context, given that they know what needs to be done and have docs. A task that takes a week or a month is entirely different: there are typically going to be multiple approaches with different strengths and tradeoffs, plus discussions around what’s missing from the original request, and such tasks generally aren’t amenable to brute force. It’s not just that there’s more to do in larger tasks; the work is also fundamentally harder in a way that simply scaling up smaller tasks doesn’t capture.

I wish commenters were forced to publish their papers alongside their comments so we could compare their analytics with the original post.

That’s some grade-A rubbish. A nonsense Y axis can fit any curve.