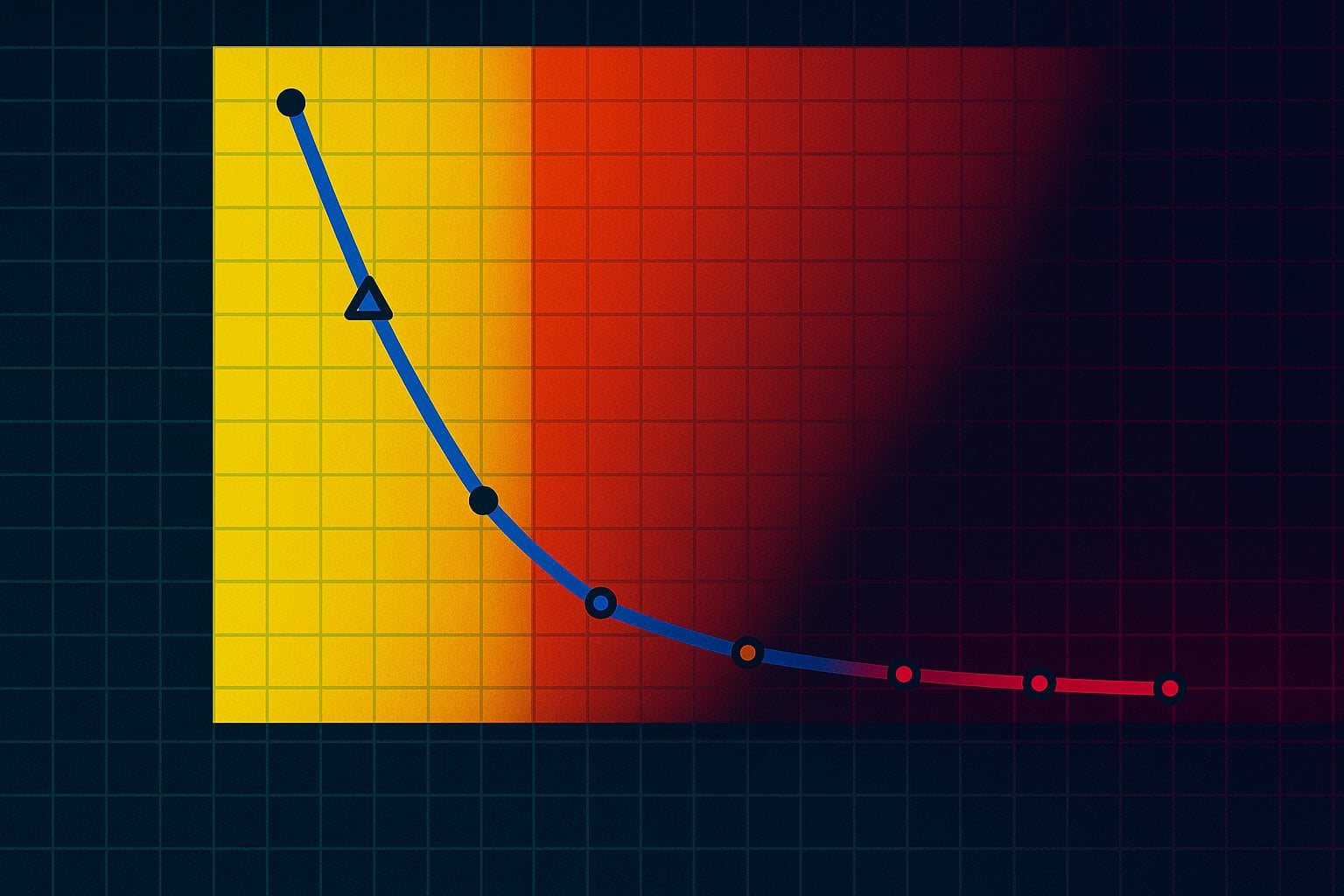

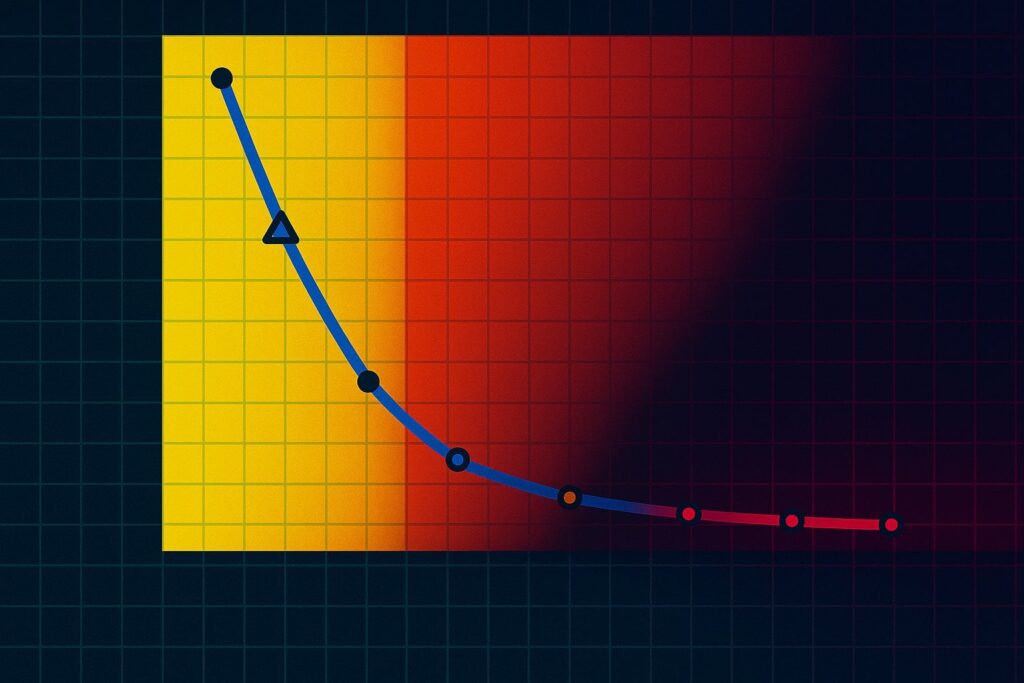

Researchers tested Large Reasoning Models on various puzzles. As the puzzles got more difficult, the AIs failed more often, until at a certain point they all failed completely.

Even without the ability to reason, current AI will still be revolutionary. It can get us to Level 4 self-driving, and outperform doctors, and many other professionals in their work. It should make humanoid robots capable of much physical work.

Still, this research suggests the current approach to AI will not lead to AGI, no matter how much training and scaling you try. That's a problem for the people throwing hundreds of billions of dollars at this approach, hoping it will pay off with a new AGI Tech Unicorn to rival Google or Meta in revenues.

Apple study finds "a fundamental scaling limitation" in reasoning models' thinking abilities

New research from Apple suggests current approaches to AI development are unlikely to lead to AGI.

by u/lughnasadh in Futurology

21 Comments

I think it depends on the definition of AGI. I would argue that LLMs are already generally intelligent across all fields.

I don’t think anyone researching AGI thought LLMs were the path. That’s just blowhard Silicon Valley investment speech.

> Even without the ability to reason, current AI will still be revolutionary. It can get us to Level 4 self-driving, and outperform doctors, and many other professionals in their work. It should make humanoid robots capable of much physical work.

This is an important point. Yes, current AI are dead ends, but even if they stop improving tomorrow, what we have right now is already helping a lot.

> Still, this research suggests the current approach to AI will not lead to AGI, no matter how much training and scaling you try. That’s a problem for the people throwing hundreds of billions of dollars at this approach, hoping it will pay off with a new AGI Tech Unicorn to rival Google or Meta in revenues.

And this is according to Apple themselves, not some random tech bro…

r/singularity in shambles once again lmao. (The same sub that mass-banned me and a lot of other people for daring to have dissenting / realistic opinions.)

Research from Apple, which is currently behind when it comes to AI? Kind of a classic approach from them.

Is there a conflict of interest here?

Of course Apple, who are lamentably losing the AI race despite crazy investments, are now pumping out studies telling people it’s not the way to go.

That’s not at all what this suggests. It’s like that article went through media misinterpretation ten times over. To my knowledge, there is zero actual fundamental limitation of LLMs.

Here’s the thing numbskull developers don’t get.

We won’t be the ones to give it AGI. We cannot create something greater than us.

It will do it itself at some point, and we may not even be able to detect it.

Big part is that these models are built and designed by a very small subsection of an already small subsection of people. The frame of scope is narrow while the resource consumption is massive.

You mean life and intelligence is more than just a giant linear algebra equation!? Surprised pikachu face!

I still don’t see why we need AGI. I don’t think we are capable or responsible enough to create God. Let’s keep it at this level, perfect it, get UBI, see how we work as a civilization when we can devote our time and talent to our passions.

As you would if you weren’t in the lead on this. “A business that’s behind pours scorn on innovation”

I, for one, would be happy to see Apple take a big L on this.

So many people have said this but it hasn’t stopped this sub from posting countless times about how every job will be taken over by AI and they’ll have us all enslaved within a few years.

Of course it’s unlikely, considering you’re nerfing them all the time.

They needed research for that?

The monkey see monkey do approach cannot do anything the monkey doesn’t see…

Do we actually want AGI, or just smart systems that can help us without taking over the world?

No, they say LLMs are an unreliable execution engine on long reasoning problems. Allow them to, e.g., write a script first, or cache their work to persistent memory as they go, and you’ve got much more scalable reasoning.

LLMs alone aren’t AGI, but we always knew that. Put LLMs in a loop with some memory and fine-tuning controls, and the limit is still unknown. This paper says nothing about the interesting stuff.

Our computer technology just isn’t anywhere near powerful enough for us to develop AGI. It is decades away.

Research about AGI from a company that is notoriously a few years behind everyone else. 🙄

They don’t even have a **basic** LLM FFS.

It is interesting that Apple is the one pushing this narrative: unlike Meta, Google, and Microsoft, they haven’t gone all in on AI and LLM investment.

Apple are hardly experts on this. Siri and Apple’s AI efforts failed. Aren’t they using Anthropic now?

There is no one in the field surprised by this. There is a question of what more access, memory, and contextual analysis could do for LLMs. Would adding “truth” sources let them build on existing information better? Can we train them to explain the process in a format to facilitate “doing” things?

There is always more room to improve, but an LLM isn’t usually set up to learn as it goes. We need something adaptive and capable of evolving.