Humanity has no strong protection against AI, experts warn | Ahead of second safety conference, tech companies accused of having little understanding of how their systems actually work

https://www.thetimes.co.uk/article/world-has-no-strong-protections-against-ai-experts-warns-mwb9q6f77


“A panel of 75 experts from 30 countries has also concluded that developers building AI systems “understand little about how their systems operate” and scientific knowledge is “very limited”.

They are critical of AI companies for failing to provide enough access to safety inspectors.”

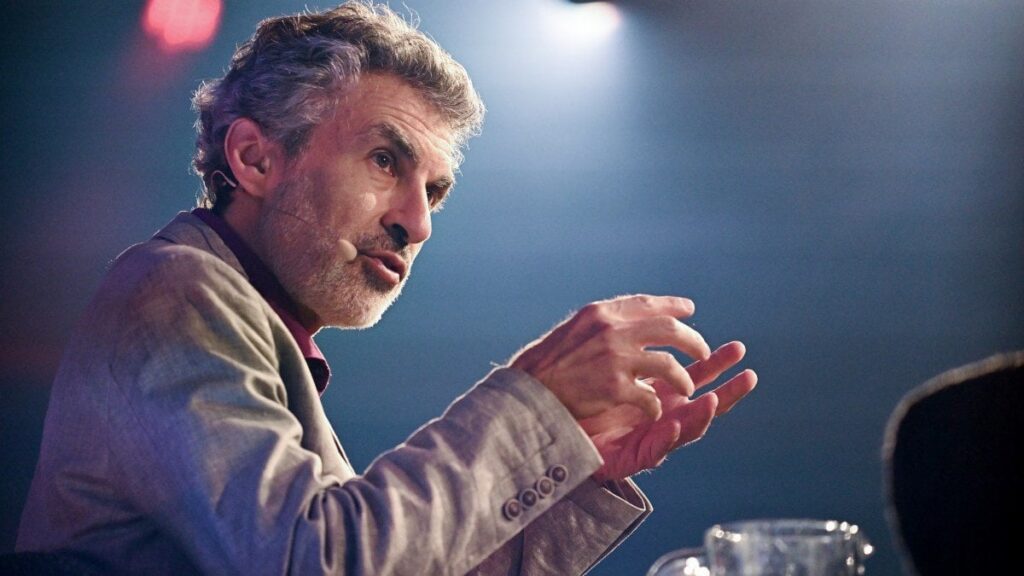

“Yoshua Bengio, one of the “godfathers of AI” who chaired the panel, said he was “concerned” about its conclusions and the lack of understanding about the technology.”

“The experts said there are “competitive incentives for AI developers to release products quickly, potentially at the cost of thorough risk management”.”

Andrea Miotti, executive director of the campaign group ControlAI, said: “The report is resoundingly clear that AI could pose extreme risks to humanity, including loss of control to powerful systems even while they are causing harm.

“This echoes the scientific consensus that AI indeed poses these risks, including the extinction of humanity, a fact worryingly acknowledged not only by the top scientists in the field and world leaders, but also the CEOs of the major AI companies. It is high time for governments to take action, and protect global security.”

Would anyone care to explain to me how it’s possible not to understand how your own tech/code works at this stage?

I get that it might be more difficult to track once you give full autonomy to AI programs, but we are not there yet, are we?