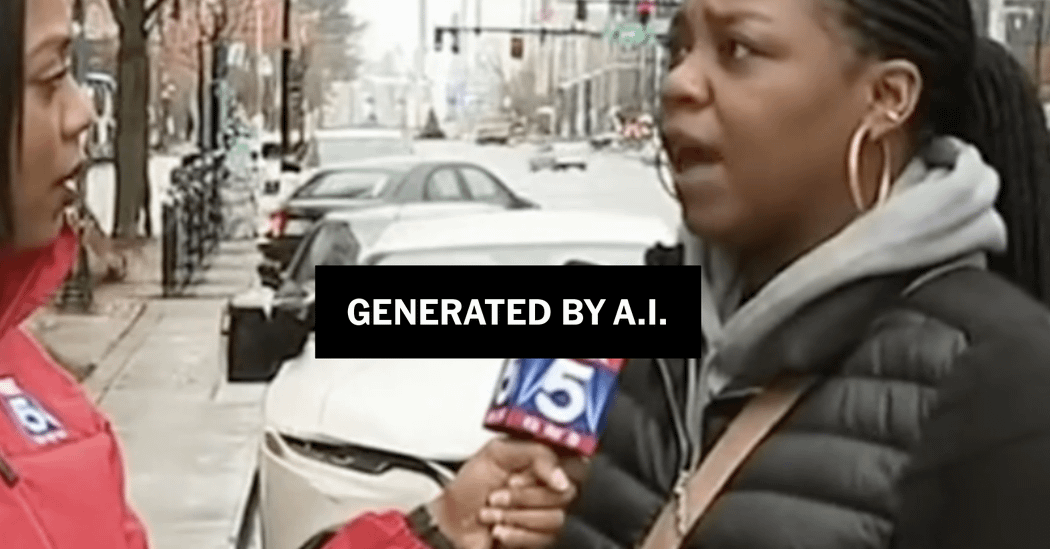

A.I. Videos Have Flooded Social Media. No One Was Ready. | Apps like OpenAI’s Sora are fooling millions of users into thinking A.I. videos are real, even when they include warning labels.

https://www.nytimes.com/2025/12/08/technology/ai-slop-sora-social-media.html


“In the two months since Sora arrived, deceptive videos have surged on TikTok, X, YouTube, Facebook and Instagram, according to experts who track them. The deluge has raised alarm over a new generation of disinformation and fakes.

While many videos are silly memes or cute but fake images of babies and pets, others are meant to stoke the kind of vitriol that often characterizes political debate online. They have already figured in foreign influence operations, like Russia’s ongoing campaign to denigrate Ukraine.

Researchers who have tracked deceptive uses said the onus was now on companies to do more to ensure people know what is real and what isn’t.”

Photos and videos need to be signed by the author with a cryptographic key and a social trust graph needs to be built – it’s not reasonable to ask users to try to discern if something is real or fake by looking at the content. Social web apps could easily do this – why don’t they?

This technology, once it gets past a level of photorealism, needs to be locked behind a license and registration, just like a gun or a driver’s license. If you can ruin someone’s life or influence an election, there have to be real consequences.

This is not the same as Photoshop or other technologies, because of how easy and accessible it now is for anyone to alter our perception of reality.

I deleted my Facebook because of this, among other reasons. Half my feed was stuff I didn’t follow, and a surprising amount was AI. Friends were even sharing AI stuff and were surprised when it was pointed out.

I can only imagine it has gotten worse.

I treat photos and videos on social media as entertainment, so don’t mind whether they are AI or not.

I’m not looking to Facebook or Reddit to get hard news or opinion.

I don’t use X, TikTok or Snapchat, and Threads is a joke in terms of anything meaningful.

OP have you tried Tai Chi walking? You too can have the abs of a 20 year old bodybuilder at 65! All it takes is to be a creation of AI and to put a tiny, tiny disclaimer about this at the bottom of the screen whenever you appear in video.

Probably from the same kinds of people who believe things based on screenshots of headlines.

I don’t really watch videos or look at images…

I can’t believe how many people like picture books. No wonder people lack literacy.

Warning labels are almost more dangerous, as people will offload critical thinking onto the labels. Intentional misinformation generated by AI won’t include them and will fool more people.

Won’t be long till all adverts are created this way. Trailers and possibly films too; the Oscars will be a barrel of laughs.

News feeds too. I mean, how are they going to vet anything? Take the next baddy to blow up a plane with a suitcase: put him in Iraq somewhere, voice, face, everything. Who’s going to know any different?

Yeah, I’ve noticed an uptick in my friends posting things they think are real, and while I think I can still see clues, I know it’s inevitable that I won’t be able to see the difference either. It’s a great motivation to disconnect at this point.

Even in a professional setting, people don’t read labels. Even if it’s in big giant red letters plastered across the screen or page.

Now imagine in a casual setting where people are doom scrolling.

Maybe it’s unrelated, but I’ve been finding myself getting offline and reading books more lately.

I wrote a paper recently suggesting that we have already moved into a post-truth society mainly because we are not ready for what is here, and what is coming. For example, imagine a social media feed that, instead of recommending content, creates it—synthetic media produced in real time to mirror your beliefs and emotions. Some examples I give in there include:

**Personalised Content Dystopia**

One threat is the emergence of a personalised content dystopia. Platforms like TikTok and YouTube no longer just recommend content from real content creators based on your viewing history, but generate it eternally, just for you. Not just based on the last video you watched, but on all the data it has on you, the GenAI will create the next one, perfectly tailored to hit the right emotional or ideological notes to maximise engagement. This creates a feedback loop that amplifies extreme filter bubbles, eroding the possibility of a shared cultural or public experience.

**Hyper-Personalised Information Streams**

This extends beyond entertainment to all forms of information. Envision personal podcasts, generated on the fly to explain the day’s events, shaped precisely by your existing beliefs and known preferences. These bespoke realities create individual informational silos, where each person consumes a reality so uniquely crafted for them that the concept of a common set of objective facts begins to dissolve, making consensus and public discourse increasingly difficult.

**Blurring Authenticity**

GenAI’s ability to resurrect the past blurs the lines of creative authorship and authenticity. Consider the recent release of ‘Now and Then’, a Beatles song that used AI to isolate John Lennon’s voice from an old demo. This is just the beginning. Imagine a future where an AI, pointed at the entire Beatles catalogue (including voices, instruments, lyrics, etc.), releases a completely new album, complete with music videos and a worldwide hologram tour (ABBA is already doing this, and Tupac was also brought back). While this raises questions about artistic legacy and what constitutes a genuine human creation, it also poses a risk to creative evolution. Why would a studio invest in a new, unproven artist when it can generate a guaranteed hit from a beloved, deceased star, or endlessly feature a bankable actor who has sold their likeness? This could lead to a homogeneous cultural landscape dominated by familiar echoes, where the same artists are perpetually recycled, stifling the emergence of new and diverse voices.

You can read the preprint here if you are interested: https://papers.ssrn.com/abstract=5742002

In 2026 I’m done with Reddit. It’s the last social media I have. Taking my life back.