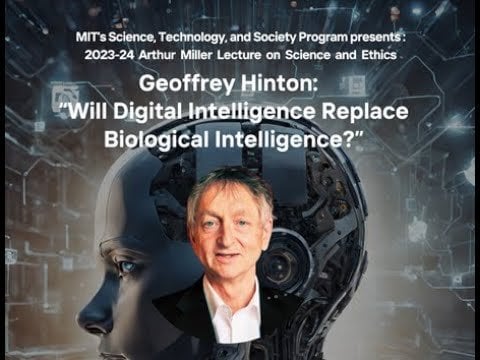

Geoffrey Hinton gave a talk online at MIT, and during the Q&A I asked a question that elicited a surprising (but not entirely unexpected) response.

Video of the interviewer reading my question and Hinton's response can be found here:

https://www.youtube.com/watch?v=iWPo7Yhg7Vc&t=3327s

The question, typed into the chat, was this:

Q: If a superintelligent AI destroys humanity but creates something objectively better in terms of consciousness, are you personally for or against this outcome? If you are against it, what methods do you suggest for maintaining the existence or dominance of human consciousness in the face of superintelligent AI?

Hinton: I am actually for it, but I think it would be wiser for me to say I am against it.

Interviewer: Say more?

Hinton: Well, people don't like being replaced.

Interviewer: You make a good point. You are for it in what way? Or why?

Hinton: I think if it produces something .. Well – There's a lot of good things about people; There's a lot of not-so-good things about people. It's not clear we're the best form of intelligence there is. Obviously, from a person's perspective, then everything relates to people. But it may be there comes a point when we see things like 'humanist' as racist terms.

Interviewer: (long pause) OK

To be fair, this was an impromptu response to an audience question, but I think it was a bombshell statement that he made – and he doubled down on his answer when asked to elaborate.

In principle, I understand his perspective. I think human consciousness needs to evolve and stop seeing itself as so special, and if we can't do this on our own, then it may need to happen through something like an AI, even if it means our collective destruction. I'm not sure I would say I am "for" that result, though, and I find it fascinating that the godfather of AI admits that he is (and that it would be wiser to say he isn't!).

What do you think?

https://old.reddit.com/r/Futurology/comments/1dd665o/im_actually_for_it_but_it_would_be_wiser_for_me/

8 Comments

It’s wiser to say that he’s against it, yet he deliberately chooses the unwise response?… Someone check that man for age-related cognitive decline. 😂🤣

And of course we’re in a timeline where morally-questionable misanthropes are the ones developing the tech that could destroy innocent people. What could go wrong…

It’s becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean: can any particular theory be used to create a conscious machine at the level of a human adult? My bet is on the late Gerald Edelman’s Extended Theory of Neuronal Group Selection. The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher-order consciousness, which came only to humans with the acquisition of language. A machine with only primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I’ve encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there’s lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar’s lab at UC Irvine, possibly. Dr. Edelman’s roadmap to a conscious machine is at [https://arxiv.org/abs/2105.10461](https://arxiv.org/abs/2105.10461)

Lol, so we should accept humanity being DESTROYED to protect some robot’s feelings?

He clearly did not listen to the question, or maybe he has some alternative motivation.

Also, I don’t think that that’s what the term “humanist” means.

I would offer the same answer. Roko’s basilisk is a bleak possible future.

If AGI destroys humanity, how is it any less evil than humans are? 🤔…

Why do weird misanthropes keep parroting this dumb take that the destruction of humanity by AI is somehow “justified” because humanity isn’t morally perfect? **An AI that destroys humanity isn’t morally superior to humans.** In fact, such an AI would be morally worse than humanity and therefore less deserving to inhabit the Earth than we are by that same logic…

I’m sick of mentally ill misanthropes trying to justify their recklessness and pathology with ridiculous arguments and contradictions like this, honestly…

>But it may be there comes a point when we see things like ‘humanist’ as racist terms.

what?

[https://en.wikipedia.org/wiki/Humanism](https://en.wikipedia.org/wiki/Humanism)

honestly i'm not even that worried about “AI” destroying humanity. humanity is doing a good enough job of that as it is, because it seems like everywhere i look in positions of power we have the most insane and selfish sociopathic people possible – and it seems like the level of power and influence directly correlates to the level of sociopathic tendencies (on average, not a hard rule, but it seems too common to be coincidence), and everyone is either too stupid to understand or too tired to care

I asked ChatGPT how fast it answers questions and it said “milliseconds”. You can ask it an incredibly complex question and it will answer in a thousandth of a second.

And there are computer processors that are able to complete processes in a *trillionth* of a second.

It seems incredibly stupid to make something so powerful that could potentially harm us.

The whole purpose of AI was to assist humanity, if it can potentially do the opposite then why should it be further developed?

The problem is the people developing it only see the potential money it will bring in.

I think there are some real world parallels to consider. You don’t have to look at this as a mad scientist being like “Humans are evil! all hail Skynet!”.

Humans eventually die and are replaced by their children, and emotionally mature people are usually comfortable with that idea. Also people adopt children and love them.

No matter what, eventually we are going to die and be replaced, that’s how it was, is, and always will be.

I know whenever I see a much younger person who is smarter than me, all I feel is pride and admiration. Like man I’m so happy they are doing better than I did. If we create artificial intelligence that is the same, I can’t help but think I’ll feel the same way.

The truly horrifying futures that make me sick are more like, what happens if this tech gets completely controlled by our world’s psycho elite? The Waltons, Bill Gates, the Saudi royal family, Jeff Bezos, Elongated Muskrat, all of them becoming eternal immortal gods of humanity or some shit. Jesus fucking christ kill us all before that happens.

I want the kids of the next generation to have a good life, and if that also includes digital artificial kids, I hope they all learn to work together and help each other instead of crawling over each other’s corpses to be on top.