Hi everyone,

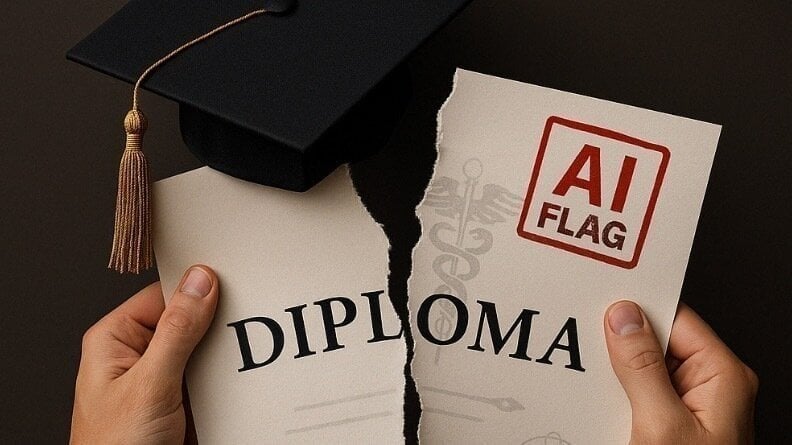

I wanted to share a real-world example of how premature deployment of AI tools is already causing serious harm.

At the University at Buffalo, students are being accused of academic dishonesty based solely on AI detection scores from Turnitin’s model, with no human review and no other evidence. Even Turnitin warns that their tool should not be used this way, but institutions are doing it anyway.

Graduations are being delayed, students are being punished, and there is no meaningful appeals process. It is a clear case of how bad AI deployment decisions, combined with institutional incentives, can cause real-world damage.

We have started a petition calling for UB to stop using AI detection as the sole basis for accusations. If you believe in responsible AI development and deployment, please consider signing or sharing.

Thank you for caring about the future we are building.

Students Are Being Punished Based on Flawed AI Models. This Is the Future We Are Facing

by u/Kelspider-48 in Futurology

There has been cheat-detection tooling in colleges for years. They were using some form of it back in the 2010s when I was in college, and it was error-prone then too. Academic laziness is the problem here, not AI tools. Tools to detect cheating will always exist and they will always be sold to academia. Turnitin did its part by telling schools the tool is not 100% accurate; the college is the one screwing up here.

So, what other evidence are you requesting?

Typically when dealing with someone dishonest you don’t show your entire hand; you point out half of the problem and then see whether they correct the entire problem or only what they were “caught” out on.

Look – in truth we need to deal with a very real educational issue: schoolwork may need to be produced in school and stay there, without the old cheat of homework that we used to rely on. Homework itself may be outmoded.

Calculators used to be something that would be taken from students on certain tests and it wasn’t an accident.

It’s impossible to ask kids not to cheat when their friends may do it and get better scores than them (and let’s be honest, rich kids often just had tutors who performed the same function). But there is a very real point here: in order to be smart enough to use a tool effectively, you have to have a base level of skill in what you’re doing to begin with. And by the way – it’s not like we’re not catching teachers cheating back with the same tools.

Kids can’t develop those faculties if they are never applying them. You don’t get to calculus without arithmetic, algebra, trig, etc. If you’ve been faking your knowledge from the start, we can teach you the subject but you’re wasting your time. “The point” of many parts of school is to actually be hard, to force a child to develop competency they don’t have yet. Do most people need calculus? No. But if you’ve forced yourself through it, the arithmetic/algebra behind a majority of business is a non-issue.

I’d love an open discussion about how AI is going to disrupt the entire education field, as anyone can compete now.

I’d also argue that it needs to be embraced because it is truly an amazing tool for human growth.

In the meantime? Take advantage while you can!

> Even Turnitin warns that their tool should not be used this way

We’re 25 years into using honor-based systems like this in Big Tech (“pretty please don’t do this or that”) and yet we still haven’t learned.

Making people check a tickbox that basically says “I promise pinky swear” and acting shocked when people break the toothless promise is stupid.

This issue predates AI, and it is broader than just students (criminal prosecution and decisions on sentencing and probation are another big one).

Read Weapons of Math Destruction. The dangers of this sort of black-box algorithmic model, with a lot of concrete examples, are the point of the book.

This is one of the things that should never happen with AI: it must be used as a tool by humans and not as a substitute for humans.

I can see teachers using AI to help them find the students who are cheating. They know their students, and AI adds more information for evaluating the issue.

But AI without human review to punish students? Dystopia!

Find your professor’s PhD thesis and feed it into the AI, see what happens 😂

The deeper question is still too uncomfortable for people to navigate.

How do you teach in this world, with the advances of AI of even today? How do you deal with what we will have even at the end of the year? Next year?

What is the _goal_ of this education? What is the outcome we are hoping for?

Even that misses the forest for the trees. What if all of the researchers ringing alarm bells are right, and we have AGI in a handful of years? What are we even doing now?

I suspect the rejection of this potential outcome is often done because many people don’t even want to face that future, and would rather pray that it never comes.

I wonder what needs to happen before the majority of the world (including me!) is even able to comprehend some of the ramifications, let alone… do anything about it.

Run the professors’ papers through it and see what comes out.

I’ve also noticed a few journalistic articles that cite AI as a source, which is all fine and good. However, when you pose a leading question that presupposes its conclusion, AI spits out data that fits your narrative. This isn’t real journalism; it’s pure bias. It’s also lazy, because the person isn’t even doing the research.

I got flagged by one of these models back in 2008, but I went before a 3-student panel to judge whether I had plagiarized or not. The assignment flagged was a 300-word essay on why I was taking an English class that I spent all of 10 minutes on, so it’s not surprising that I used some common phrases. I brought examples of my previous writing and the panel eventually agreed that not only was I not guilty, but that it was stupid that my professor submitted these assignments to be scanned by the software.

If I had not had human judges, though, I would have been screwed.

It is nothing new. Lazy lecturers will always find a way to finish grading quickly, even if it means less accurate grades. Two examples from my life:

We had a coding test with a few tasks. The results were based only on the final number. You used the wrong method but got a correct result? All points. You wrote an almost perfect program (e.g. you used the wrong constant)? 0 points, even though, without the time limit and stress, the error would likely have been caught in peer review or by unit tests.

We had an exam where the lecturer gave us 3 questions to answer in 15 minutes, even though there was a lot of material to learn. Having only 3 short questions introduced a lot of unnecessary variance.

AI tools will be no different. Some people will use them correctly, some people will use them incorrectly.

This is it. This is the moment we’ve all been waiting for… THE RETURN OF PRINTED MEDIA!

Students should be allowed to check that their homework doesn’t fail the AI test, because there are so many false positives. But AI companies like Turnitin don’t want that, because it would show students how to avoid being accused of using AI, and the companies make their money from the universities.

I don’t think it’s unreasonable to use AI-based detection systems in this context. What’s ridiculous is the lack of human oversight and a functional appeals process. Way too many executives and other people in leadership positions are very ignorant of the realities of AI, but very confident in its abilities.

I’m saying it now; the future is handwritten essays, in class, with no screens allowed.

Relevant book: Weapons of Math Destruction (Cathy O’Neil)

This is mostly an American problem, because American systems are dumb and they don’t respect student rights. In Sweden for example this would be illegal and teachers would be reported for making accusations without proof. And according to all available information, AI detectors aren’t proof. So it’s a very simple issue and just down to human error and lack of respect for due process.